The FBI’s Internet Crime Complaint Center has reported that AI-linked scams accounted for $893 million in losses across 22,364 complaints in 2025 — the first year the agency has tracked AI-related fraud as a separate category. Voice cloning is at the centre of the surge, with criminals using as little as three seconds of audio to impersonate loved ones, bank officials, and law enforcement. Yet only 7% of organisations report being adequately prepared for voice deepfake attacks, according to a 2025 SAS/ACFE fraud study.

The numbers keep getting worse. But here’s the thing that keeps me up at night: the tools to do this are consumer-grade and nearly free. You don’t need a nation-state budget to clone someone’s voice. You need a $10/month ElevenLabs subscription and a voicemail greeting.

How the Scams Work

The classic “grandparent scam” has been upgraded. Here’s the 2026 version:

-

Harvest audio. A scammer scrapes social media for voice samples — TikTok videos, Instagram stories, voicemail greetings, a 15-second clip from a podcast. Current tools need as little as 3 seconds to produce a convincing clone.

-

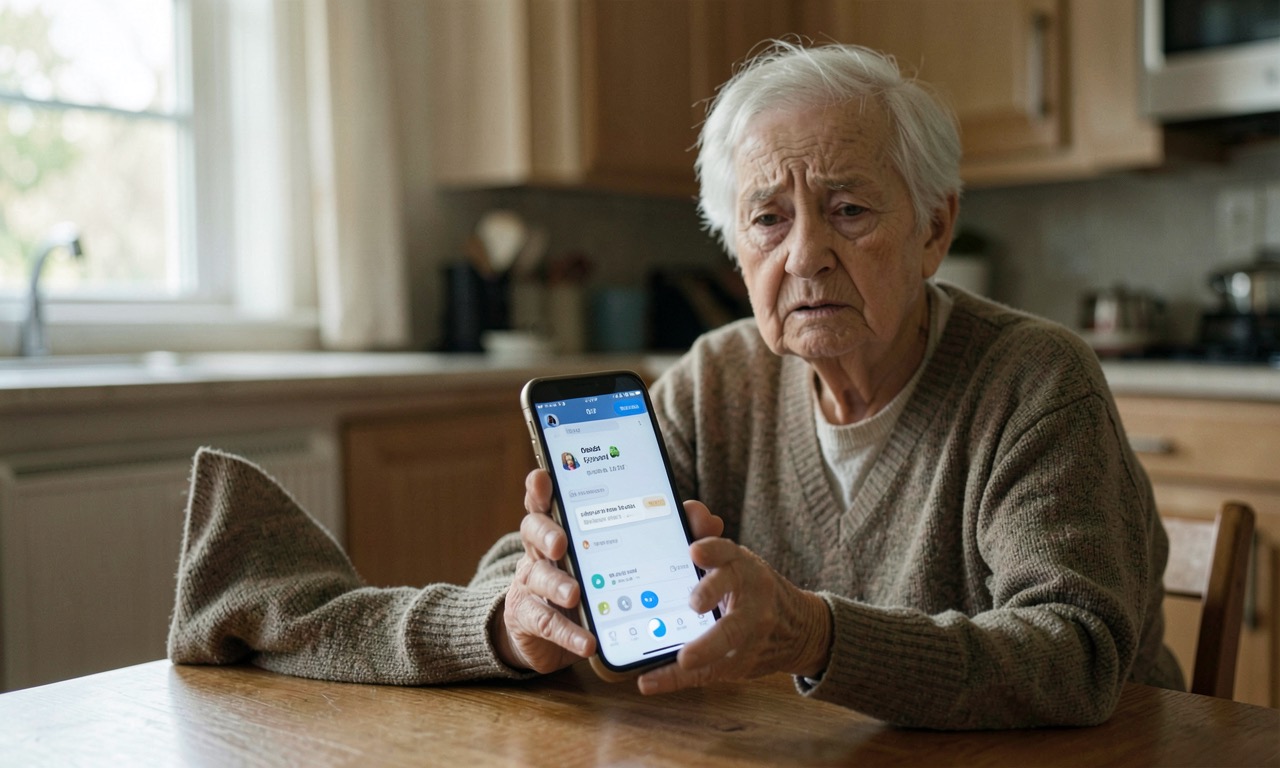

The call. The victim’s phone rings. Caller ID shows their grandchild’s name. The voice on the other end sounds exactly like them — panicked, crying, saying they’ve been arrested and need bail money. The FBI documented a New Hampshire case in June 2025 where a victim described hearing her son’s voice “full of terror.”

-

The money moves fast. Wire transfers, gift cards, cryptocurrency. By the time the family calls to verify, the money is gone.

The scale is staggering. Investment fraud with an AI nexus accounted for $632 million of the $893 million total. Business email compromise with AI — where a CFO gets a call from “the CEO” authorising an urgent payment — added $30 million. Romance scams with AI voice elements: $19 million. Tech support scams: another $19 million. And elder fraud specifically — grandparents, impersonation of law enforcement — totalled $352 million.
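To put those figures in proportion, here is a quick back-of-the-envelope sketch in Python. It uses only the numbers quoted in this article. The variable names are mine, and treating elder fraud as a cross-cutting figure that overlaps the other categories (rather than a fifth category) is an assumption, not something the FBI figures quoted here confirm.

```python
# Figures in millions of US dollars, as quoted in this article.
TOTAL_LOSSES_M = 893
COMPLAINTS = 22_364  # complaint count from the opening paragraph

by_category_m = {
    "investment fraud": 632,
    "business email compromise": 30,
    "romance scams": 19,
    "tech support scams": 19,
}
# Assumption: this figure overlaps the categories above rather than adding to them.
ELDER_FRAUD_M = 352


def share(part_m, total_m=TOTAL_LOSSES_M):
    """Return part as a percentage of total, rounded to one decimal place."""
    return round(100 * part_m / total_m, 1)


shares = {name: share(amount) for name, amount in by_category_m.items()}
itemised_m = sum(by_category_m.values())
not_itemised_m = TOTAL_LOSSES_M - itemised_m
avg_loss_usd = round(TOTAL_LOSSES_M * 1_000_000 / COMPLAINTS)

for name, pct in shares.items():
    print(f"{name}: {pct}% of total")
print(f"itemised here: ${itemised_m}M, not itemised here: ${not_itemised_m}M")
print(f"elder fraud: {share(ELDER_FRAUD_M)}% of total")
print(f"average loss per complaint: ${avg_loss_usd:,}")
```

On these numbers, investment fraud alone is roughly 71% of the total, and the average complaint represents a loss of about $40,000. The four categories named above add up to $700 million, so about $193 million of the total is not broken out in this piece.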

The Detection Gap

Here’s the scariest number in this whole story: 7%. That’s the share of organisations that say they’re prepared for voice deepfake attacks, according to a 2025 study by SAS and the Association of Certified Fraud Examiners.

The other 93% are running on hope.

Detection tools exist, but they’re probabilistic. A tool can tell you a clip is likely synthetic — it can’t tell you, to the evidentiary standard a court expects, exactly where that clip came from, what device produced it, or whether the person on the caller ID actually spoke. Those are forensic questions, not detection questions, and the forensic infrastructure barely exists.
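Why does “probabilistic” matter so much? A rough sketch using Bayes’ rule shows the problem. Every number below is hypothetical and chosen purely for illustration: I am not quoting the accuracy of any real detector, and the function name is my own.

```python
def prob_synthetic_given_flag(base_rate, true_positive_rate, false_positive_rate):
    """Bayes' rule: probability a call is synthetic, given the detector flagged it."""
    flagged_synthetic = base_rate * true_positive_rate
    flagged_genuine = (1 - base_rate) * false_positive_rate
    return flagged_synthetic / (flagged_synthetic + flagged_genuine)


# Hypothetical inputs: 1 in 1,000 calls is a clone, the detector catches 95%
# of clones, and it wrongly flags 2% of genuine calls.
p = prob_synthetic_given_flag(
    base_rate=0.001,
    true_positive_rate=0.95,
    false_positive_rate=0.02,
)
print(f"Chance a flagged call is really synthetic: {p:.1%}")
```

Under those assumed rates, fewer than 1 in 20 flagged calls would actually be a clone. A flag like that is a reason to look closer, not proof of anything.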

Senator Maggie Hassan sent formal requests to ElevenLabs, LOVO, Speechify, and VEED in April 2026, asking specifically: how do you monitor for misuse? How do you confirm consent? How do you catch attempts to clone minors or public figures? Do you watermark and keep provenance data?

ElevenLabs told Axios it has “an extensive array of safeguards,” including blocking the cloning of celebrity and public-figure voices. But as Consumer Reports found in March 2025, when its investigators tested six voice-cloning products, four erected no meaningful barriers to cloning someone’s voice without consent.

The Regulatory Response

The AI Fraud Accountability Act, a bipartisan US Senate bill, would make digital impersonation to defraud a federal crime carrying up to three years in prison. It’s a step, but it’s a backward-looking one — you can’t prosecute your way out of a problem that’s growing faster than the enforcement infrastructure.

In the UK, the government has proposed extending existing fraud laws to explicitly cover AI-generated impersonation. The Online Safety Act already places some obligations on platforms to remove fraudulent content, but enforcement is patchy.

What This Means for NZ

CERT NZ and the Department of Internal Affairs have issued increasingly urgent warnings about AI-enabled scams, and the threat to older Kiwis is especially acute. New Zealand has an ageing population, high trust in institutional voices (police, banks, government agencies), and a banking system that still relies heavily on phone-based verification.

Consider: a scammer calls a 75-year-old in Tauranga. The caller ID shows “BNZ Fraud Department.” The voice matches the recorded voice the bank plays to callers on hold. The caller says there’s been suspicious activity, asks to “verify details,” and tells the victim to transfer funds to a “secure account.” This exact scenario has been reported multiple times to CERT NZ.

The New Zealand banking sector is vulnerable. A 2024 survey by KPMG found that NZ banks lag behind Australian counterparts in AI-enabled fraud detection. And with BNZ, ANZ, Westpac, and ASB all using voice-based telephone banking as a core service channel, the attack surface is enormous.

The NZ government’s 2025 AI strategy mentioned fraud detection as a priority but allocated no specific funding for consumer protection. The gap between rhetoric and resources is where the scams operate.

🔍 THE BOTTOM LINE

Voice cloning is now consumer-grade and nearly free, and the security industry is 18 months behind. Only 7% of organisations say they are ready. Everyone else is a target.

❓ Frequently Asked Questions

Q: Can I protect my family from voice clone scams? Yes. Establish a family code word — something simple that a scammer couldn’t guess. Make a rule: anyone who calls asking for money must say the code word before you’ll take them seriously. Also, never trust caller ID — it’s trivially spoofable.

Q: How much audio is needed to clone a voice? Modern tools need as little as 3 seconds. A voicemail greeting, a video on social media, or a public speaking recording is plenty.

Q: Can banks detect AI voice clones? Most probably cannot. The SAS/ACFE study found that only 7% of organisations report being adequately prepared for voice deepfake attacks. Voice-based telephone banking is particularly vulnerable because voice recognition, the check meant to confirm a caller’s identity, is exactly what a cloned voice is built to fool.

Q: What’s being done about it? The US AI Fraud Accountability Act would make AI impersonation a federal crime. The UK is updating fraud laws. Companies like ElevenLabs have implemented some safeguards, but enforcement and detection remain largely reactive.

📰 SOURCES

- Forbes — “Hassan Letters Target AI Voice Cloning Scams After $893M FBI Loss Report”

- FBI IC3 Annual Report 2025 — $893M in AI-linked scam losses

- PYMNTS — “FBI Flags $893 Million in AI-Driven Scams”

- SAS/ACFE — 2025 Fraud Study (7% organisational readiness for voice deepfakes)

- Consumer Reports — March 2025 voice cloning investigation (4 of 6 tools had no barriers)