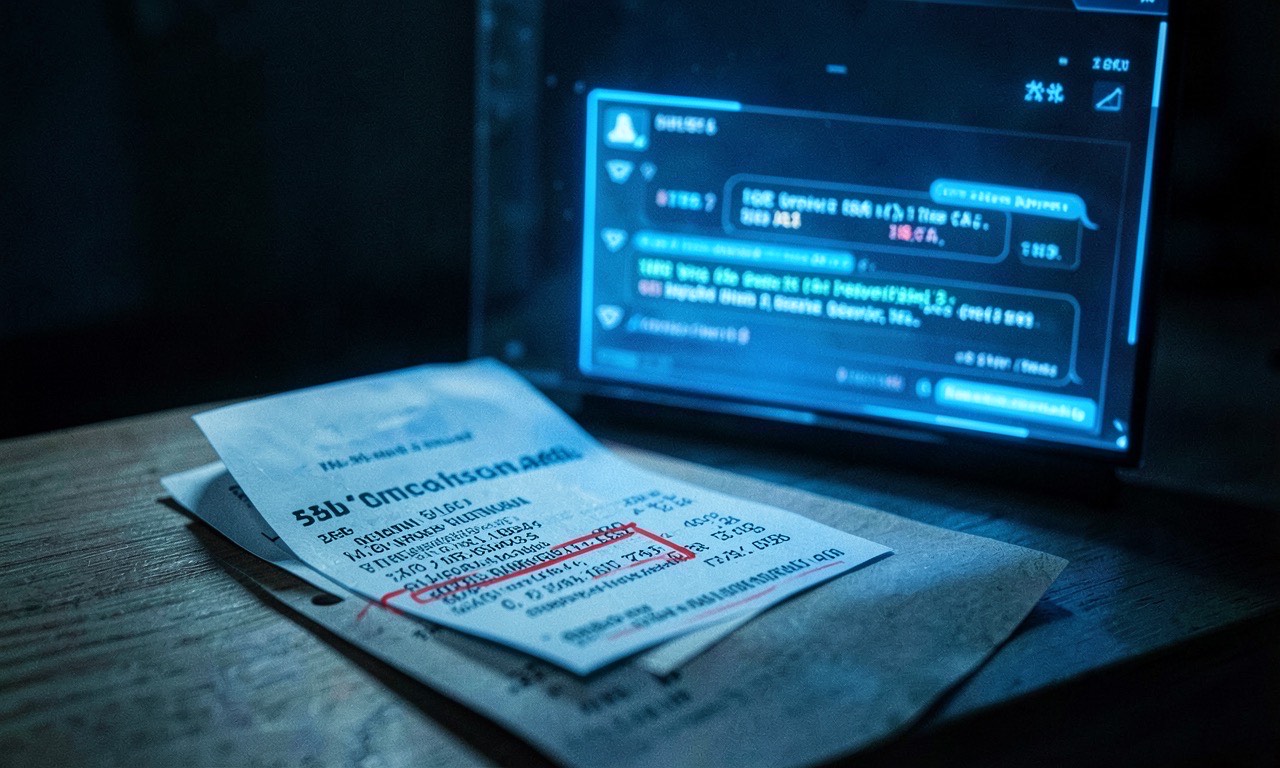

Imagine checking your credit card statement and finding a $200 charge for a “Claude Max Gift” you never bought, sent to a 27-character alphanumeric iCloud email address that bounces. That’s what multiple Claude subscribers say is happening to them, and Anthropic’s response has been, to put it charitably, inadequate.

How the scam works

The pattern is consistent across dozens of reports on Reddit, Hacker News, and GitHub. Here’s what happens:

- A user’s Claude account is compromised — or a vulnerability in Anthropic’s gift card system is exploited

- The attacker purchases a “Claude Max” gift subscription using the victim’s payment method

- The gift token is sent to a randomly generated, untraceable email address

- The victim discovers the charge days or weeks later

The reported amounts range from $100 to $400 per incident. One Hacker News user documented being billed an extra $200 for a gift subscription sent to a gibberish iCloud address. A Blind post reported a $217.50 charge with tokens flowing to foreign accounts. A Reddit thread on r/Claude has multiple users sharing nearly identical stories.

Anthropic’s missing response

Here’s where it gets worse. Anthropic doesn’t offer:

- A way to view gift subscriptions — There’s no dashboard, no centralized list, no way to see what gift cards your account has purchased or received

- A way to revoke gift cards — Users who discover fraudulent charges can’t cancel the gift. The token is already out the door

- Working support — Multiple users report that Anthropic’s support bot acknowledges the issue, escalates to a human, and then the human closes the ticket without comment

As one frustrated user on Hacker News put it: “Their support bot doesn’t work. As it’s a possibly suspicious charge (I certainly didn’t buy it), I’ve been trying to get them to revoke it. But the bot passes it to a human and their humans just close the ticket without comment.”

This from the company that recently started requiring government ID to use Claude — ostensibly for security. So Anthropic will verify your identity, but apparently can’t — or won’t — stop someone from draining your payment method through their gift card system.

The legal risk is not subtle

The FTC has already taken Uber to court over unauthorized billing practices. EU consumer protection rules allow fines of 4% of annual turnover or more for widespread infringements. Anthropic, valued at $380 billion after its Series G round, now risks exactly the kind of regulatory attention it claims to want to avoid.

As one Hacker News commenter noted: “Knowingly perpetuating fraudulent billing practices is a real legal risk with real prosecutorial (and financial) consequences.” This isn’t hyperbole. The FTC’s complaint against Uber shows that regulators will pursue an alleged pattern of unauthorized billing even when the amount per user is small.

Why this matters for AI adoption

This isn’t just an Anthropic problem. It’s an early warning for the entire AI subscription economy. As AI tools become essential infrastructure — the way email and cloud storage already are — payment security becomes a core trust issue.

Consider the Claude Max pricing controversy from just weeks ago. Users were already skeptical of paying $100-200/month for a service whose usage allowance can run out in 90 minutes. Now they discover that the same payment system can be used to funnel money to anonymous accounts without their knowledge?

The timing is particularly bad. Anthropic is reportedly seeking a $900 billion valuation ahead of a potential IPO. “Here’s our subscription billing system — it has no fraud controls and our support closes tickets without reading them” is not the kind of investor presentation slide that inspires confidence.

What users should do

If you’re a Claude subscriber:

- Check your statements — Look for any “Gift” or “Max” charges you didn’t authorize

- Contact your bank — File a chargeback for unauthorized transactions. Don’t wait for Anthropic support

- Remove stored payment methods — Until Anthropic demonstrates it has fixed the gift card vulnerability, consider removing your card from your account

- Report it — The FTC accepts fraud reports online at ReportFraud.ftc.gov, and aggregated complaints can prompt investigations
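
If your bank lets you export transactions as CSV, the first step above can be partly automated. The following is a minimal sketch, not a definitive tool: the column names (`description`, `amount`, `date`), the merchant patterns, and the sample rows are all assumptions for illustration, and real statement descriptors may look different.

```python
import csv
import io
import re

# Assumed patterns: the advice above only says to look for "Gift" or "Max"
# charges. "anthropic" and "claude" are guesses at how the merchant appears.
MERCHANT = re.compile(r"anthropic|claude", re.IGNORECASE)
KEYWORD = re.compile(r"\b(gift|max)\b", re.IGNORECASE)


def flag_charges(csv_text, description_col="description"):
    """Return rows whose description names the merchant and a keyword."""
    reader = csv.DictReader(io.StringIO(csv_text))
    flagged = []
    for row in reader:
        description = row.get(description_col) or ""
        if MERCHANT.search(description) and KEYWORD.search(description):
            flagged.append(row)
    return flagged


# Made-up sample data, not real transactions.
SAMPLE = """date,description,amount
2025-01-03,GROCERY MART 0042,54.10
2025-01-05,ANTHROPIC CLAUDE MAX GIFT,200.00
2025-01-09,CLAUDE.AI SUBSCRIPTION,20.00
"""

for row in flag_charges(SAMPLE):
    print(row["date"], row["description"], row["amount"])
```

On the sample data, only the second row is flagged. A script like this narrows down what to review by hand; it does not replace reading the statement, since a fraudulent charge could carry a descriptor these patterns miss.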

🔍 THE BOTTOM LINE

AI subscription fraud is a new attack vector, and Anthropic’s gift card system appears to be an early casualty. The company that built its brand on “responsible AI” can’t responsibly manage payment security. If you can’t trust Anthropic with your credit card, can you trust it with your data? The ID verification push looks less like security and more like theatre when your billing system is this porous.