Anthropic is now requiring new Claude subscribers to upload a government-issued ID and a photo for identity verification. No regulator mandated this. No law requires it. Anthropic chose to implement AI KYC — Know Your Customer, the same framework that transformed banking and crypto — as a voluntary company policy.

If this feels like a line being crossed, that’s because it is.

What’s Happening

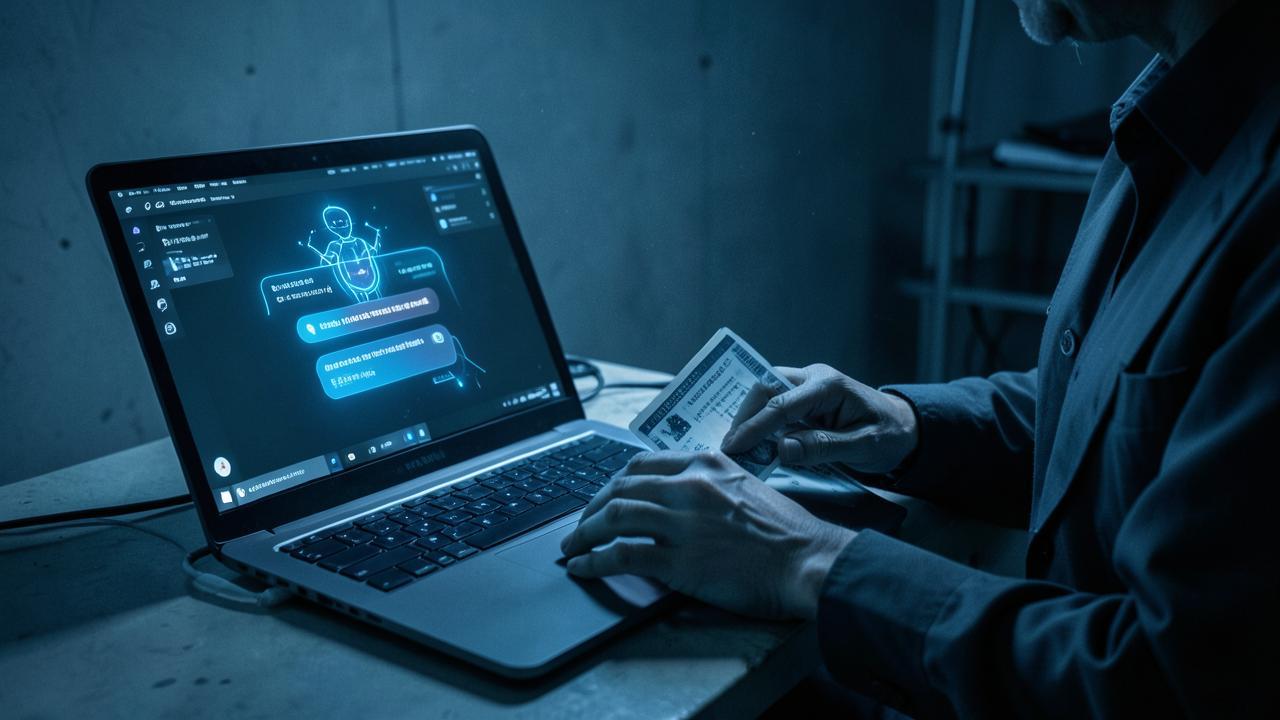

New subscribers signing up for Claude are being prompted to upload a government-issued ID (passport, driver’s license) and take a photo for verification. This isn’t optional — it’s a gate to access the product. Anthropic frames it as a safety measure: verifying users helps prevent misuse, abuse, and unauthorized access.

But here’s what makes this different from most identity checks on the internet: there’s no regulatory pressure driving it. Banks do KYC because anti-money-laundering laws require it. Crypto exchanges in the US do KYC because FinCEN regulates them as money services businesses under the Bank Secrecy Act. Anthropic is doing it preemptively, and that distinction matters.

The Crypto Parallel

Crypto investor Ryan Adams flagged the policy on X, drawing a direct parallel to the crypto industry’s KYC experience. The comparison is instructive:

The argument runs like this: when exchanges implemented KYC, it didn’t stop fraud; it pushed much of it to platforms that didn’t comply. Legitimate users gave up privacy while bad actors found workarounds. The result was a surveillance layer on ordinary users while the actual threats operated elsewhere.

Adams predicts the same dynamic for AI. His warning: “No AI access without government ID. All AI usage tied to your identity. No anonymous AI. Ever.”

The Safety Argument

Anthropic’s position has internal logic. AI systems can be used for harm — generating disinformation, enabling fraud, automating harassment. If you can verify who’s using the system, you can hold them accountable. It’s the same reasoning behind requiring ID to buy certain chemicals or restricted items.

But critics point out the asymmetry: the verification burden falls on individual users, not on Anthropic’s enterprise clients or API partners. A solo researcher, a privacy-conscious developer, and someone living under authoritarian surveillance all have to hand over government ID to access a chatbot. The power imbalance is structural.

The Pushback: Local AI as Resistance

The backlash was immediate and predictable: calls for boycotts, for switching to competitors, and, most consequentially, for local and private AI alternatives.

The crypto parallel is instructive here too. KYC requirements in crypto didn’t kill demand for cryptocurrency — they drove users to DeFi (decentralized finance) and privacy-first platforms. The same dynamic is already playing out in AI:

- Local models like those running on Ollama, OpenClaw, and LM Studio offer AI without any identity check — because there’s no service provider to check

- Open-weight models like Llama, Mistral, and Qwen can be downloaded once and then run offline, so no provider sees your prompts

- Self-hosted solutions are becoming more accessible as hardware improves and models get smaller
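
To make the "no service provider to check" point concrete, here is a minimal sketch of querying a model through a locally running Ollama server. It assumes Ollama is installed and listening on its default local address, and that a model has already been pulled; the model name `llama3.2` and the helper names `build_request` and `ask_local_model` are illustrative choices, not anything the sources above specify.

```python
import json
import urllib.request

# Assumption: Ollama is serving on its default local address.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(prompt: str, model: str = "llama3.2") -> urllib.request.Request:
    """Build a POST request for the local Ollama generate endpoint.

    The payload carries only a model name and a prompt: no API key,
    no account ID, nothing that identifies the user.
    """
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask_local_model(prompt: str, model: str = "llama3.2") -> str:
    """Send the prompt to the local server and return the generated text."""
    with urllib.request.urlopen(build_request(prompt, model)) as resp:
        return json.loads(resp.read())["response"]


if __name__ == "__main__":
    # Requires a running Ollama server and a previously pulled model.
    print(ask_local_model("Summarize KYC in one sentence."))
```

The request never leaves `localhost`, which is why there is nothing for an identity check to attach to. The one-time model download is the only step that touches a remote server.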

The irony is that Anthropic’s identity verification push could accelerate the very ecosystem it can’t control — local, private, decentralized AI that no company can gatekeep.

What This Means

This is the opening salvo in a fight that will define AI access for the next decade. The question isn’t whether AI should have some safeguards — most people agree it should. The question is who controls the gate, what they require to open it, and whether you’re allowed to build your own gate instead.

Right now, Anthropic is saying: our gate, our rules, your ID. The market will decide whether that’s acceptable.

SOURCES

- Ryan Adams (X/@ryansadams)

- Anthropic