Anthropic is discussing its frontier AI model Mythos with the Trump administration, co-founder Jack Clark confirmed on April 13, even as a separate dispute with the Pentagon over military guardrails has landed the AI lab on a national security blacklist.

The talks mark a new phase in the relationship between frontier AI companies and the federal government. This isn't regulatory posturing. It is direct, bilateral engagement about what the next generation of AI models can do, and about who gets to know before the rest of the world.

What Happened

Speaking at the Semafor World Economy event in Washington, Anthropic co-founder Jack Clark confirmed that the company is in active discussions with the Trump administration about Mythos, its most powerful model yet.

“We have a narrow contracting dispute, but I don’t want that to get in the way of the fact that we care deeply about national security,” Clark said. “Our position is the government has to know about this stuff. So absolutely, we’re talking to them about Mythos, and we’ll talk to them about the next models as well.”

The nature and details of the talks — including which agencies are involved — were not immediately clear.

The talks are the first confirmed direct engagement between the administration and Anthropic over model governance since the company restricted Mythos Preview to a vetted group of 40-plus cybersecurity firms, citing safety concerns about broad public release.

The Mythos Context

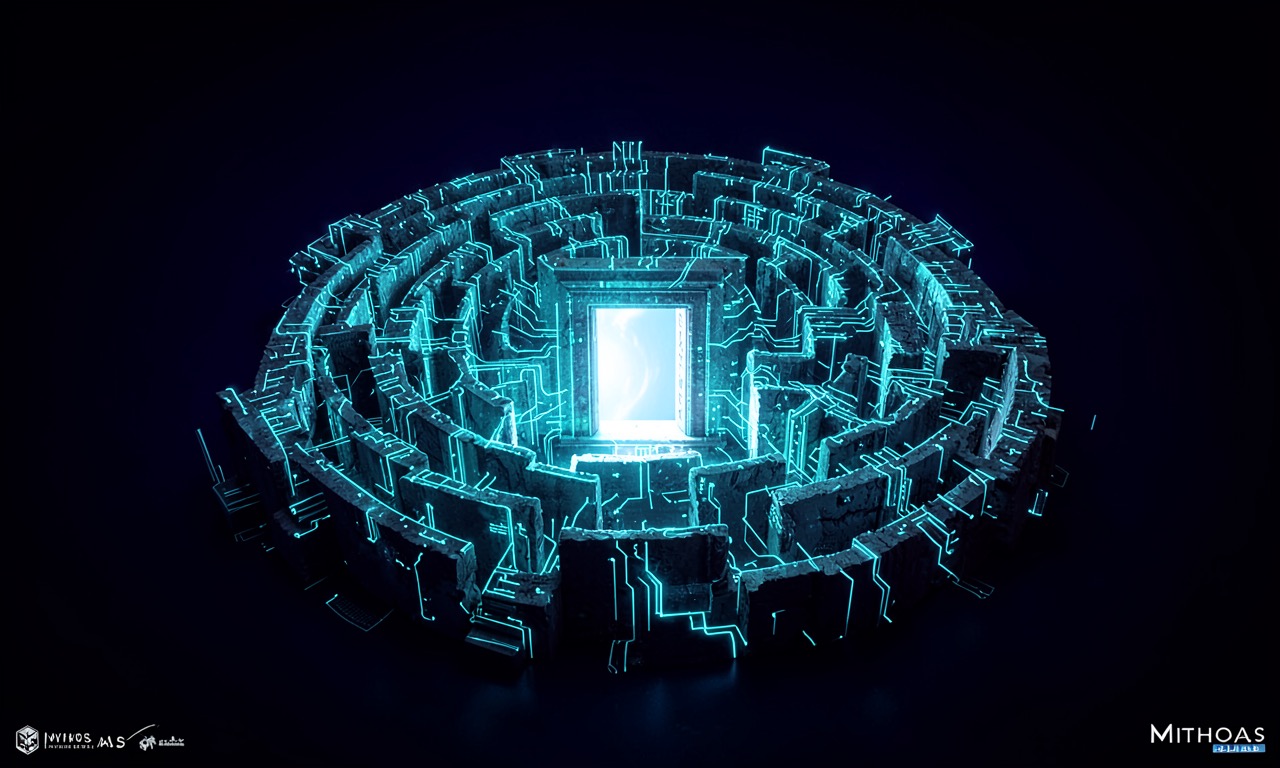

Anthropic announced Mythos on April 7, 2026, describing it as its "most capable yet for coding and agentic tasks." That language barely captures what the model can actually do.

Experts have described Mythos's ability to identify cybersecurity vulnerabilities and devise ways to exploit them as potentially unprecedented. During Project Glasswing, the cybersecurity initiative through which Anthropic released the preview, Mythos Preview found thousands of previously unknown security vulnerabilities, including a 27-year-old bug in OpenBSD and flaws in every major operating system and web browser.

That capability — finding and exploiting vulnerabilities at scale — is precisely what makes government engagement so sensitive. The same model that can defend critical infrastructure can also, in the wrong hands, attack it. Anthropic’s decision to restrict access to a vetted group of cybersecurity firms was an acknowledgment of that dual-use reality.

The Pentagon Blacklist Problem

The talks happen against a backdrop of escalating conflict between Anthropic and the Pentagon.

Last month, the Pentagon designated Anthropic as a supply-chain risk — a classification that bars the military and its contractors from using the company’s AI tools. The designation followed a contract dispute over guardrails: Anthropic refused to deploy its models for the Pentagon without restrictions on how the military could use them.

A federal appeals court in Washington, D.C., last week declined to block the blacklisting for now, a win for the Trump administration. A separate appeals court had previously reached the opposite conclusion in another Anthropic legal challenge, setting up a circuit split that could eventually reach the Supreme Court.

The blacklisting means that even as Anthropic is talking to the administration about future models, the Pentagon — the agency most likely to be a major customer for powerful, secure AI — is formally prohibited from doing business with the company.

Why This Matters

Three things make this moment significant:

1. The first confirmed model-level government engagement

AI companies have talked to governments about policy, regulation, and voluntary commitments. But direct discussions about a specific frontier model — its capabilities, its deployment, its governance — before public release? That’s new. And it creates a precedent that other frontier labs (OpenAI, Google DeepMind, xAI) will face pressure to follow.

2. The safety-sovereignty tension

Anthropic built its brand on AI safety. It restricted Mythos because it judged broad release too dangerous. But if the company shares model details with the government while restricting public access, that raises a fundamental question: who gets to know what AI can do? Is safety served by giving governments early access, or by keeping capabilities private until responsible deployment is possible?

3. The dual-track reality

Anthropic is simultaneously being blacklisted by the Pentagon and briefing the White House on its next model. That contradiction isn’t a bug — it’s a feature of the current regulatory landscape. There is no unified federal position on AI. The Pentagon’s supply-chain designation and the White House’s engagement with Anthropic are happening in parallel, driven by different institutional imperatives.

What Comes Next

The circuit split on Anthropic's legal challenges makes Supreme Court review more likely. Whether the Pentagon blacklisting is ultimately upheld or struck down, the outcome will reshape the relationship between AI companies and national security agencies.

Meanwhile, Mythos remains in restricted preview. Anthropic has shown that it’s willing to limit access to its most powerful model on safety grounds. But Clark’s public confirmation of government talks signals that the company sees national security engagement as a separate and necessary track — one that runs alongside, and sometimes in tension with, its safety commitments.

The companies that built the most powerful technology in human history are now negotiating directly with the government about who gets to know what it can do. Whether that produces responsible governance or regulatory capture depends entirely on the details — details that, for now, remain undisclosed.

SOURCES

- Reuters — “Anthropic talking to the Trump administration about its next AI model, co-founder says,” April 13, 2026

- Anthropic — Statement from Dario Amodei on discussions with the Department of War

- CNBC — “Anthropic limits rollout of Mythos AI model over cyberattack fears”

- TechCrunch — “Anthropic debuts preview of powerful new AI model Mythos in new cybersecurity initiative”

- CBS News — “Anthropic CEO: We’re trying to deescalate Pentagon AI standoff”