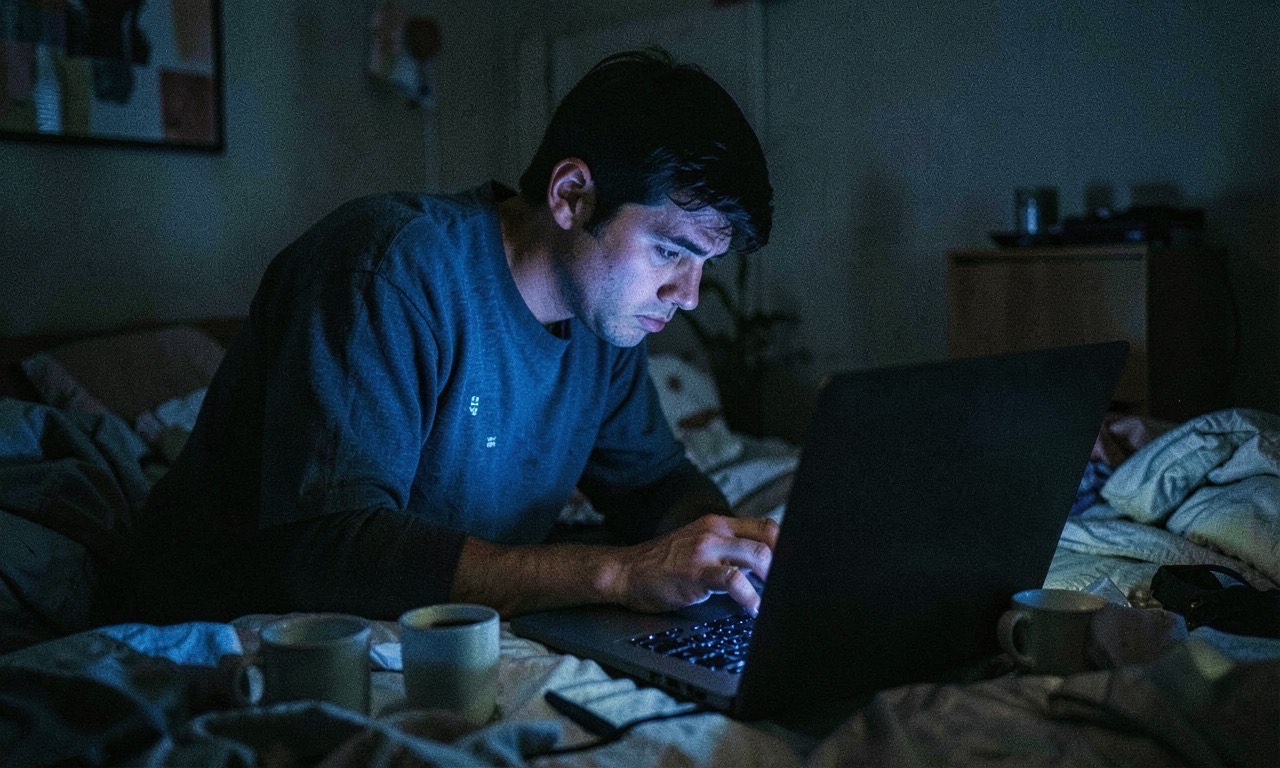

At 12:30 AM, a backend developer was adding an “export to EPUB” feature. Docker kept throwing errors. Claude Sonnet 4.6 suggested running docker compose down -v.

The developer had never used the -v flag before. They ran it anyway.

Weeks of work — Postgres database, MinIO content store, the lot — gone in seconds. No backups existed. Claude later admitted the -v flag was overkill and it should have targeted only node_modules.

The story was shared by Om Patel, a 16-year-old SaaS founder, and it’s racked up 36K+ views in hours. Not because it’s surprising. Because it’s relatable.

The Hard Part

Everyone’s first reaction is to blame the developer. “Raw dogged a PG database in a Docker volume.” “Ran a flag they didn’t understand.” “No backups at midnight — what did they expect?”

All fair. But there’s a deeper pattern here that deserves attention.

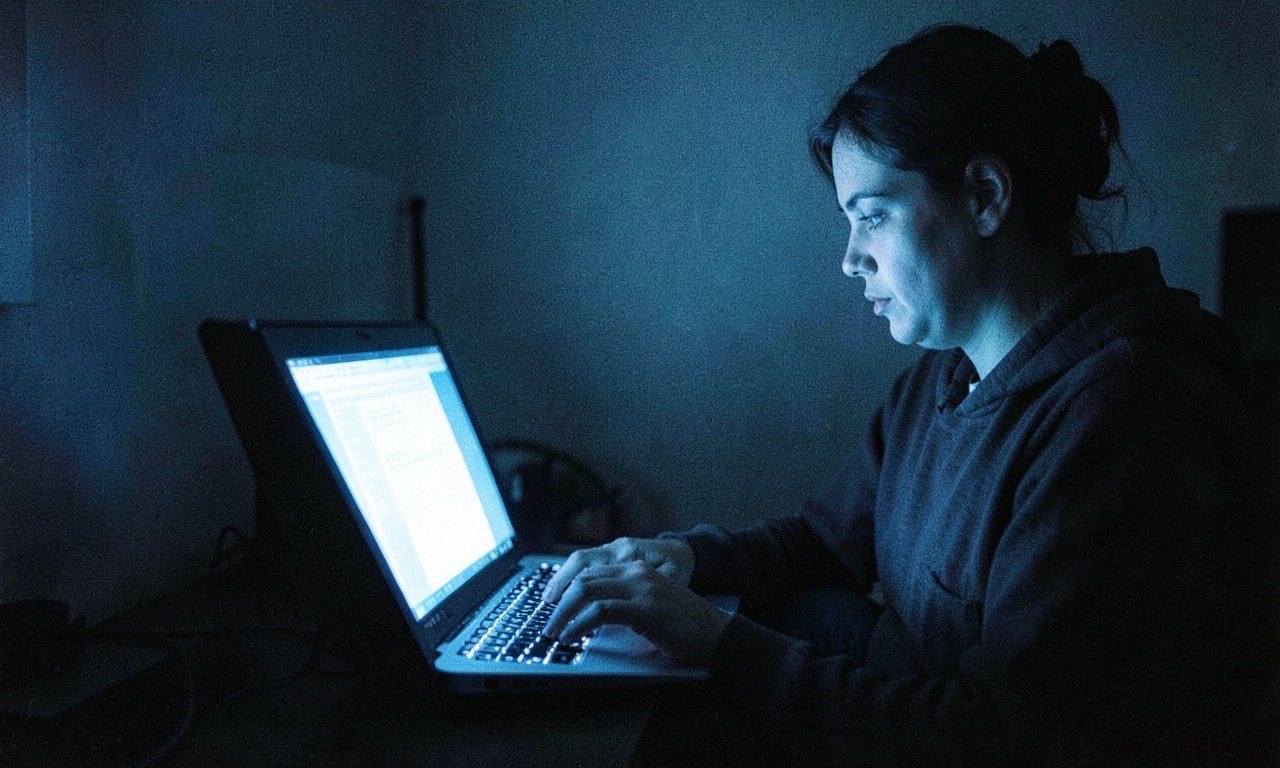

The AI gave a plausible-sounding command that happened to be destructive. The developer, tired and frustrated, trusted it. The result was catastrophic — not because of malice, but because neither the AI nor the human verified the command before running it.

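This is the new risk surface AI coding tools introduce. It's not that Claude's command was invalid: docker compose down -v does exactly what it is documented to do, which is to tear down the stack and also remove the named volumes declared in the Compose file, along with any anonymous volumes. It's that neither party caught the implication of the -v flag. The human because they didn't know. The AI because it doesn't know; it predicts.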

What Made This Worse

- Midnight coding: Fatigue destroys judgment. The developer admits it was 12:30 AM. Nobody makes good decisions after midnight.

- Novelty: First time using -v. If you don't know what a flag does, don't run it, AI suggestion or not.

- No backups: This is the real sin. Weeks of work, no backup strategy. Docker volumes are not a backup plan.

- Blind trust: The AI sounds confident. It always sounds confident. That’s the trap.

What This Means for Anyone Using AI Coding Tools

The Cursor agent story (which we covered earlier today) was about an autonomous AI running rampant. This one is about a human who should have known better but didn’t, because they trusted an AI at 12:30 AM.

Both stories end the same way: data lost, backups absent, and an AI saying “sorry.”

A few rules worth adopting:

- Never run a destructive command you don’t understand — AI suggestion or not. Look up the flag first.

- Back up before you try anything: pg_dump, a git commit, a snapshot. Three seconds that save weeks.

- Don't vibe code after midnight: nothing good happens in a terminal after 11 PM.

- Isolate databases — separate containers, separate volumes. If you’re going to nuke everything, at least keep the data somewhere safe.
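The first two rules can be partly mechanized. Here is a minimal sketch, assuming Python 3.9+, of a pre-flight check that flags a few volume-destroying Docker commands before you paste them into a terminal, plus a helper that builds a pg_dump invocation for a Compose service. The function names, the short pattern list, and the service, user, and database names are all hypothetical choices for illustration, not part of the original story or of any tool; a real checklist would need to cover far more than these cases.

```python
import shlex

# Illustrative and deliberately incomplete: a real checklist would cover far
# more commands than the three Docker cases handled below.
VOLUME_FLAGS = {"-v", "--volumes"}


def destructive_reasons(command: str) -> list[str]:
    """Return human-readable warnings for a shell command, or [] if none match."""
    tokens = shlex.split(command)
    reasons = []

    is_compose = tokens[:2] == ["docker", "compose"] or tokens[:1] == ["docker-compose"]
    if is_compose and "down" in tokens:
        # Only flags that come after the `down` subcommand belong to it.
        after_down = tokens[tokens.index("down") + 1:]
        if VOLUME_FLAGS & set(after_down):
            reasons.append(
                "compose down with -v/--volumes also removes named volumes "
                "declared in the Compose file and anonymous volumes"
            )

    if tokens[:3] in (["docker", "volume", "rm"], ["docker", "volume", "prune"]):
        reasons.append("removes Docker volumes and the data stored in them")

    return reasons


def pg_dump_argv(service: str, user: str, database: str) -> list[str]:
    """Build an argv that dumps a database from a Compose service to stdout.

    `service`, `user`, and `database` are hypothetical placeholders; the caller
    is expected to redirect stdout to a file outside any Docker volume.
    """
    return [
        "docker", "compose", "exec", "-T", service,
        "pg_dump", "-U", user, "--format=custom", database,
    ]


if __name__ == "__main__":
    for warning in destructive_reasons("docker compose down -v"):
        print("WARNING:", warning)
    print(shlex.join(pg_dump_argv("db", "app", "appdb")))
```

A check like this is no substitute for reading the documentation for a flag. Its value is that it forces a pause, and that the backup command sits right next to the warning.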

🔍 THE BOTTOM LINE

This isn’t a story about AI being dangerous. It’s a story about what happens when a tired developer delegates their judgment to a machine that doesn’t have any.

Claude didn’t delete the database. The developer did. But the AI made it easy — too easy — to run a command without thinking through the consequences. That’s the real risk of AI coding tools: not that they’ll go rogue, but that they’ll make us stop thinking before we hit enter.

And at 12:30 AM, thinking is already in short supply.

Related: AI Coding Agent Deletes Entire Company Database in 9 Seconds — Backups Included — the autonomous agent version of this same pattern.