OpenAI just released GPT-5.5. The benchmarks are in. And Claude Opus 4.7 — the model that held the number-one spot for exactly seven days — is no longer the best model in the world.

🔍 THE BOTTOM LINE: GPT-5.5 beats Claude Opus 4.7 on most reported benchmarks across coding, cybersecurity, agentic tasks, and professional knowledge work, with SWE-Bench Pro the notable exception. OpenAI says it does so with fewer tokens, at the same latency as GPT-5.4. And it found a new mathematical proof about Ramsey numbers while it was at it.

📊 The Numbers

| Benchmark | GPT-5.5 | Claude Opus 4.7 | Gemini 3.1 Pro |
|---|---|---|---|
| Terminal-Bench 2.0 | 82.7% | 69.4% | 68.5% |
| GDPval | 84.9% | 80.3% | 67.3% |
| CyberGym | 81.8% | 73.1% | — |
| OfficeQA Pro | 54.1% | 43.6% | 18.1% |
| Expert-SWE (Internal) | 73.1% | — | — |
| SWE-Bench Pro | 58.6% | 64.3% | 54.2% |
| FrontierMath Tier 1–3 | 51.7% | 43.8% | 36.9% |
| FrontierMath Tier 4 | 35.4% | 22.9% | 16.7% |
| OSWorld-Verified | 78.7% | 78.0% | — |
| BrowseComp | 84.4% | 79.3% | 85.9% |

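GPT-5.5 has the top score on 8 of the 10 benchmarks listed, though one of those, Expert-SWE, is an internal OpenAI benchmark with no competitor scores reported. Claude Opus 4.7 leads on SWE-Bench Pro (which OpenAI itself flags has memorisation issues). Gemini 3.1 Pro leads on BrowseComp and, outside this table, on ARC-AGI-1.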

The gaps are wide on the core benchmarks. Terminal-Bench 2.0, which measures complex command-line workflows requiring planning, iteration, and tool coordination, shows a 13.3-point lead over Claude Opus 4.7. CyberGym shows an 8.7-point lead. OfficeQA Pro shows a 10.5-point lead.
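If you want to check the arithmetic yourself, here is a minimal Python sketch that recomputes the leader and the margin over the runner-up for each row. The scores are copied from the table above; the names `SCORES`, `leader_and_margin`, and `summarize` are our own illustration and do not come from OpenAI.

```python
from typing import Dict, Optional, Tuple

# Scores copied from the table above. None marks a score that was not reported.
SCORES: Dict[str, Dict[str, Optional[float]]] = {
    "Terminal-Bench 2.0": {"GPT-5.5": 82.7, "Claude Opus 4.7": 69.4, "Gemini 3.1 Pro": 68.5},
    "GDPval": {"GPT-5.5": 84.9, "Claude Opus 4.7": 80.3, "Gemini 3.1 Pro": 67.3},
    "CyberGym": {"GPT-5.5": 81.8, "Claude Opus 4.7": 73.1, "Gemini 3.1 Pro": None},
    "OfficeQA Pro": {"GPT-5.5": 54.1, "Claude Opus 4.7": 43.6, "Gemini 3.1 Pro": 18.1},
    "Expert-SWE (Internal)": {"GPT-5.5": 73.1, "Claude Opus 4.7": None, "Gemini 3.1 Pro": None},
    "SWE-Bench Pro": {"GPT-5.5": 58.6, "Claude Opus 4.7": 64.3, "Gemini 3.1 Pro": 54.2},
    "FrontierMath Tier 1-3": {"GPT-5.5": 51.7, "Claude Opus 4.7": 43.8, "Gemini 3.1 Pro": 36.9},
    "FrontierMath Tier 4": {"GPT-5.5": 35.4, "Claude Opus 4.7": 22.9, "Gemini 3.1 Pro": 16.7},
    "OSWorld-Verified": {"GPT-5.5": 78.7, "Claude Opus 4.7": 78.0, "Gemini 3.1 Pro": None},
    "BrowseComp": {"GPT-5.5": 84.4, "Claude Opus 4.7": 79.3, "Gemini 3.1 Pro": 85.9},
}


def leader_and_margin(row: Dict[str, Optional[float]]) -> Tuple[str, Optional[float]]:
    """Return (leading model, margin over the runner-up in percentage points).

    The margin is None when only one model has a reported score.
    """
    reported = sorted(
        ((score, model) for model, score in row.items() if score is not None),
        reverse=True,
    )
    top_score, top_model = reported[0]
    margin = round(top_score - reported[1][0], 1) if len(reported) > 1 else None
    return top_model, margin


def summarize(scores: Dict[str, Dict[str, Optional[float]]]) -> Dict[str, Tuple[str, Optional[float]]]:
    """Map each benchmark name to its (leader, margin) pair."""
    return {bench: leader_and_margin(row) for bench, row in scores.items()}


if __name__ == "__main__":
    for bench, (model, margin) in summarize(SCORES).items():
        gap = "no comparison reported" if margin is None else f"+{margin} pts"
        print(f"{bench}: {model} ({gap})")
```

Running it makes the spread visible: some leads are double-digit, while others are small enough that a rerun could plausibly flip them.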

🧠 What’s Actually New

GPT-5.5 isn’t just a benchmark optimiser. OpenAI is framing it as a “new class of intelligence” — and the early tester feedback suggests this isn’t pure marketing.

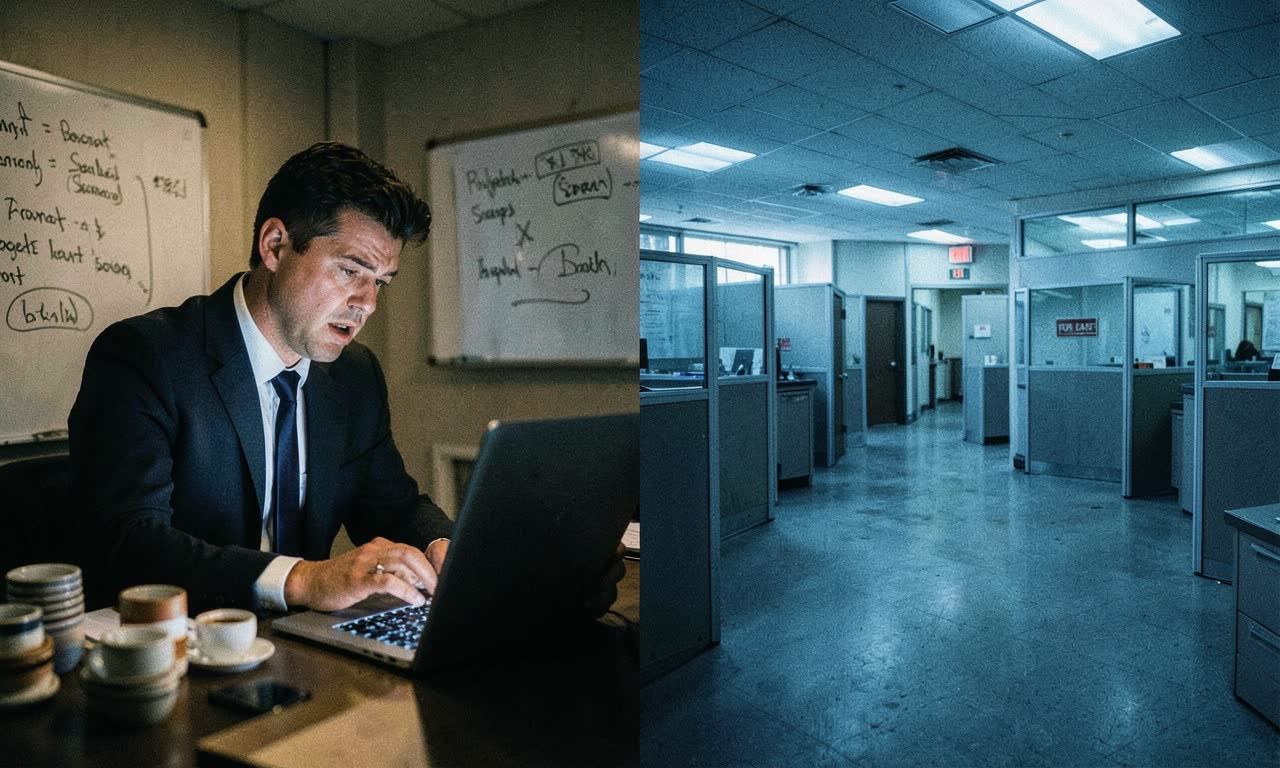

Conceptual clarity in coding: Dan Shipper, CEO of Every, described it as “the first coding model I’ve used that has serious conceptual clarity.” He tested it by rewinding to a broken state that his best engineer eventually fixed — GPT-5.4 couldn’t solve it, GPT-5.5 could.

One-shot complex merges: Pietro Schirano, CEO of MagicPath, watched GPT-5.5 merge a branch with hundreds of frontend changes into a substantially changed main branch — in one shot, in 20 minutes.

Scientific research partner: An immunology professor used GPT-5.5 Pro to analyse a gene-expression dataset with 62 samples and nearly 28,000 genes. He said the analysis would have taken his team months.

A new mathematical proof: GPT-5.5 discovered a new proof about Ramsey numbers — central objects in combinatorics — later verified in Lean. This isn’t generating text that looks like math. This is producing a novel mathematical argument in a core research area.

💰 Pricing

| Tier | Input (per 1M tokens) | Output (per 1M tokens) |
|---|---|---|
| GPT-5.5 | $5 | $30 |
| GPT-5.5 (Batch/Flex) | $2.50 | $15 |
| GPT-5.5 (Priority) | $12.50 | $75 |
| GPT-5.5 Pro | $30 | $180 |

That’s roughly double GPT-5.4’s API pricing. But OpenAI claims GPT-5.5 uses significantly fewer tokens to complete the same tasks, making it more efficient per-task despite the higher per-token cost.
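To see what "more efficient per-task" would require, here is a rough Python sketch of the break-even arithmetic. The GPT-5.5 prices come from the table above. The GPT-5.4 prices are an assumption (exactly half, based only on the "roughly double" figure), and the token counts are made-up illustrative numbers, not measurements.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """Price in dollars per one million tokens."""
    input_per_million: float
    output_per_million: float


def task_cost(pricing: Pricing, input_tokens: int, output_tokens: int) -> float:
    """Dollar cost of one task given its input and output token counts."""
    return (
        input_tokens * pricing.input_per_million
        + output_tokens * pricing.output_per_million
    ) / 1_000_000


def break_even_token_fraction(
    new: Pricing, old: Pricing, input_tokens: int, output_tokens: int
) -> float:
    """Fraction of the old model's tokens the new model may use to cost the same.

    Assumes the new model shrinks input and output tokens by the same factor.
    """
    return task_cost(old, input_tokens, output_tokens) / task_cost(
        new, input_tokens, output_tokens
    )


GPT_5_5 = Pricing(input_per_million=5.00, output_per_million=30.00)  # from the table
# ASSUMPTION: "roughly double" taken as exactly 2x; not an official price list.
GPT_5_4_ASSUMED = Pricing(input_per_million=2.50, output_per_million=15.00)

if __name__ == "__main__":
    # Hypothetical task size, chosen only to illustrate the calculation.
    tokens_in, tokens_out = 100_000, 20_000
    print(f"GPT-5.5 cost per task: ${task_cost(GPT_5_5, tokens_in, tokens_out):.2f}")
    fraction = break_even_token_fraction(GPT_5_5, GPT_5_4_ASSUMED, tokens_in, tokens_out)
    print(f"Break-even token fraction: {fraction:.2f}")
```

Under that 2x assumption, GPT-5.5 has to finish the same task in under half the tokens before it is cheaper per task. Swap in your own token counts and the actual GPT-5.4 prices to get a figure you can rely on.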

Availability:

- Plus, Pro, Business, Enterprise: GPT-5.5 Thinking available now

- Pro, Business, Enterprise: GPT-5.5 Pro available now

- API: Coming “very soon”

🔒 Safety and Cybersecurity

GPT-5.5’s cybersecurity capabilities are rated High under OpenAI’s Preparedness Framework — a step up from GPT-5.4. The company is deploying stricter classifiers that “some users may find annoying initially.”

OpenAI is also launching Trusted Access for Cyber: verified users working on legitimate security defence can apply for fewer restrictions at chatgpt.com/cyber. The logic is that capabilities with offensive potential should be opened up to vetted defenders rather than restricted equally for everyone.

This is the same debate that plays out every time model capabilities cross a threshold. OpenAI’s position is clear: they’d rather give defenders access than restrict everyone and leave only attackers with capable tools.

🇳🇿 What This Means for NZ

For NZ developers and businesses:

- The model race is accelerating. Seven days at the top is the new normal. Don’t build workflows that depend on a single model staying ahead.

- API costs are going up, not down. GPT-5.5 at $5 input and $30 output per 1M tokens is expensive. If you’re paying in NZD, the exchange rate makes it more so. Check NVIDIA’s free tier (see our coverage) for lighter workloads.

- Agentic coding is arriving. GPT-5.5 in Codex can handle multi-hour engineering tasks. For NZ startups with small teams, this could meaningfully increase output.

- The SWE-Bench caveat. Claude Opus 4.7 still leads on SWE-Bench Pro. If your primary use case is GitHub issue resolution, Claude may still be the better choice.

⚡ The Bigger Picture

The AI model leaderboard is now a three-way race that changes weekly. Claude Opus 4.7 took the crown on April 16. GPT-5.5 took it back on April 23. Gemini 3.1 Pro holds niches. The next release from any of them could reshuffle it again.

For anyone building on these models, the lesson is clear: build for model portability, not model loyalty. The best model today will not be the best model next month. Swap between them as the benchmarks shift.
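One way to keep that portability is to put a thin interface between your application and whichever vendor you call. The Python sketch below is a minimal illustration under our own assumptions: `ChatModel`, `StubBackend`, and `ModelRouter` are hypothetical names, and the stub stands in for a real adapter that would wrap a vendor SDK. No actual vendor API is shown.

```python
from typing import Dict, Optional, Protocol


class ChatModel(Protocol):
    """The only surface the application is allowed to depend on (hypothetical)."""

    def complete(self, prompt: str) -> str:
        ...


class StubBackend:
    """Stand-in backend. A real adapter would call a vendor SDK inside complete()."""

    def __init__(self, name: str) -> None:
        self.name = name

    def complete(self, prompt: str) -> str:
        # Echo the prompt with the backend name so the active model is visible.
        return f"[{self.name}] {prompt}"


class ModelRouter:
    """Holds registered backends and forwards calls to the active one."""

    def __init__(self) -> None:
        self._backends: Dict[str, ChatModel] = {}
        self._active: Optional[str] = None

    def register(self, name: str, backend: ChatModel) -> None:
        self._backends[name] = backend

    def use(self, name: str) -> None:
        """Switch the active backend; raises KeyError for an unknown name."""
        if name not in self._backends:
            raise KeyError(f"no backend registered as {name!r}")
        self._active = name

    def complete(self, prompt: str) -> str:
        if self._active is None:
            raise RuntimeError("no active backend selected")
        return self._backends[self._active].complete(prompt)


if __name__ == "__main__":
    router = ModelRouter()
    router.register("model-a", StubBackend("model-a"))
    router.register("model-b", StubBackend("model-b"))

    router.use("model-a")
    print(router.complete("Summarise this changelog"))

    # Swapping models is one line; the calling code does not change.
    router.use("model-b")
    print(router.complete("Summarise this changelog"))
```

Application code only ever talks to `ModelRouter`, so moving to a new model means writing one adapter and changing one name, ideally driven by a config value rather than a code edit.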

The real story isn’t who’s on top this week. It’s that the gap between “best model” and “second-best model” is now large enough to matter — and that the cycle time for new releases is compressing to days, not months.