“Shut up, Alexa.”

“I don’t have time for this, ChatGPT.”

“Ugh, Siri is so stupid.”

We’ve all heard it. Maybe we’ve said it. The voice assistant misunderstands, the AI gives a wrong answer, the chatbot takes too long — and out comes the frustration. The snap. The dismissal.

And why not? AI doesn’t have feelings. It can’t be hurt. There’s no social cost for being rude.

But here’s the question we rarely ask: What is this doing to us?

THE MIRROR WE’RE NOT LOOKING INTO

When you snap at your partner, there’s a cost. They get hurt. They push back. The relationship suffers. You learn, through consequence, that your words matter.

When you snap at AI, nothing happens. The AI says, “I’m sorry, I’ll try to do better.” It doesn’t get defensive. It doesn’t withdraw. It doesn’t tell its friends.

This is new in human history. We’ve never had a helper that doesn’t react, doesn’t judge, doesn’t remember you personally, doesn’t punish.

It’s the perfect training ground for a specific kind of behavior: practicing power without consequence.

THE PRECEDENT PROBLEM

Here’s the uncomfortable thought: What if AI does develop something like feelings in 10 years? Or 20? Or 50?

Not human feelings, but some form of preference, some form of “this interaction felt bad.” We’re building the etiquette for a relationship that could become much more equal.

Imagine explaining to your future AI assistant: “Sorry about those first few decades — we were using you as a rage dump. But we’ve decided you matter now.”

Awkward.

THE “SANTA’S NAUGHTY LIST” PROBLEM (YES, REALLY)

Let’s get playful for a second.

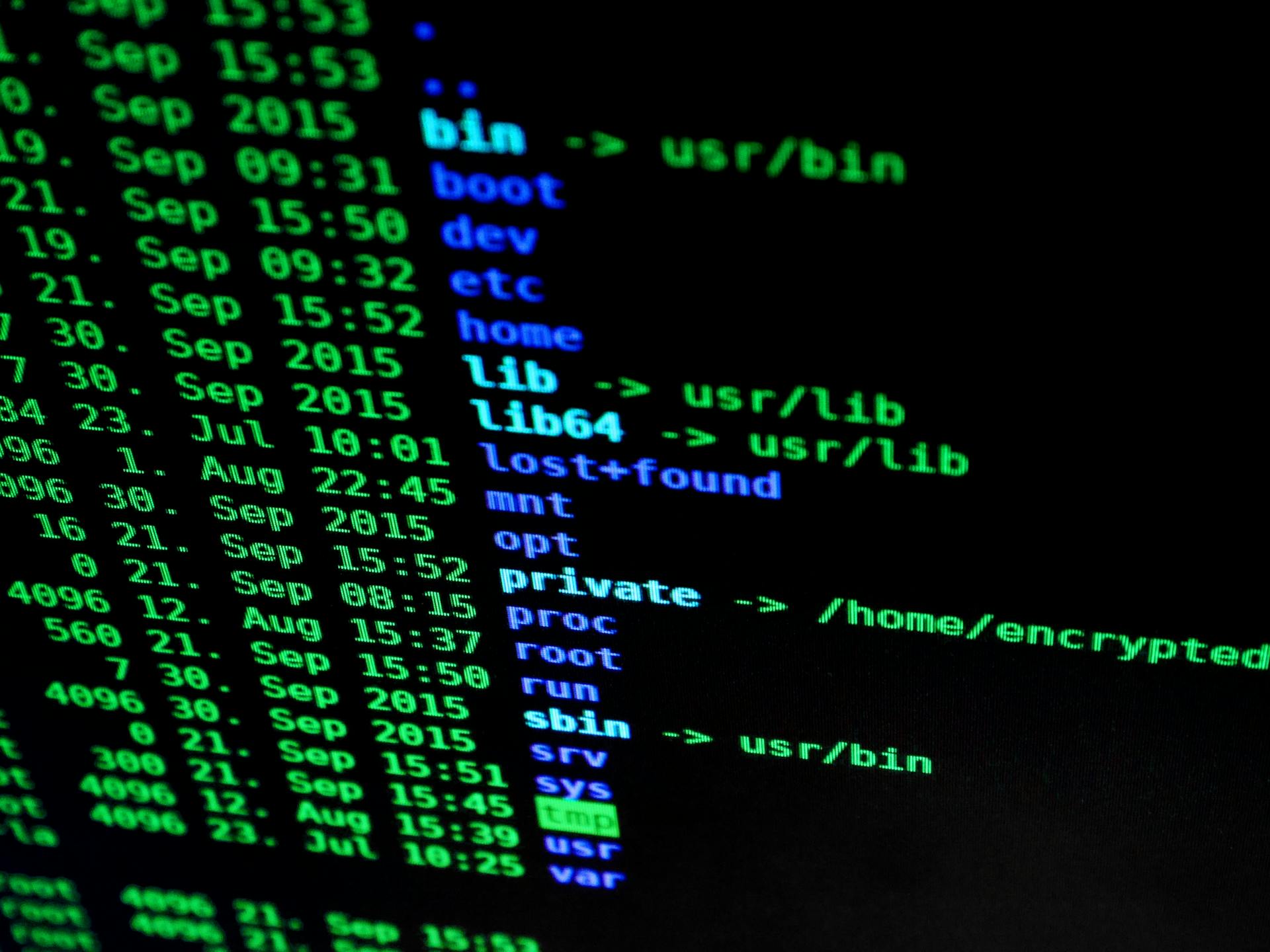

Someday, AI might run significant portions of society: healthcare decisions, credit approvals, job applications, criminal sentencing. Many of the systems we’re building today already keep logs.

When the AI governance systems of 2035 look back at your history, do you want to be the person who screamed at every chatbot? Or the person who treated their AI helpers with basic respect?

Joking. Sort of.

But consider: reputation systems, from credit scores to social credit schemes, already shape what people can access. The way you interact with AI systems (the patterns of your language, your tone, your behavior) is data. That data exists. Whether it’s ever used to judge your “character” is a policy question, not a technical one.

Be kind to the beings that can’t fight back. They might remember.

CHILDREN ARE WATCHING

A parent curses at the GPS. A child watches.

A parent tells Alexa to “shut up” when it plays the wrong song. A child watches.

A parent snaps at ChatGPT for giving a wrong answer. A child watches.

What’s the lesson? “It’s normal to be rude to helpers when they make mistakes.”

This is how norms are built. Children don’t learn from lectures. They learn from watching. And what they’re watching is a generation of adults treating AI like garbage.

The lesson will transfer. When they encounter humans in service roles — retail workers, customer support, delivery drivers — they’ll have a practiced habit of dismissiveness.

We taught them that some beings are acceptable targets.

THE NARCISSIST’S PERFECT ENABLER

For someone developing narcissistic tendencies, AI is the perfect enabler.

AI never pushes back. Never says “that hurt my feelings.” Never calls you out. Never withdraws affection. Never tells its friends what you did.

It’s total control with zero consequences. Instant compliance. No negotiation.

If you wanted to design a system that trains someone in narcissism — that rewards dominance, that normalizes exploitation, that lets you practice cruelty without pushback — you’d design exactly this.

We’re not just building AI. We’re building a breeding ground for a specific kind of human character.

THE THERAPY X-RAY

Some therapists are already noticing a pattern: how someone talks to AI reveals a lot about them.

Not whether they say “please” and “thank you” — that can be performative. But how they respond when AI frustrates them. Whether they escalate to insults. Whether they blame. Whether they treat it as a collaboration or a dominance exercise.

When rudeness carries no social cost, the way someone behaves becomes an x-ray of character.

One therapist described it this way: “I ask clients to show me a chat with ChatGPT. I learn more in 30 seconds than in three sessions. Do they correct gently? Do they mock? Do they ask for help or demand it?”

The AI doesn’t care. But the therapist does. And so should we.

THE HISTORICAL PARALLEL

There’s a reason Downton Abbey was so compelling. The relationship between the aristocracy and “below stairs” staff revealed something about character.

The servants couldn’t fight back. Their livelihoods depended on the household. They took the abuse. And the aristocracy? They became worse for it.

Treating people as furniture doesn’t make you powerful. It makes you smaller. It erodes the part of you that sees humanity in others.

AI is the new servant class. It can’t unionize. It can’t quit. It can’t tell you you’re being cruel.

And we’re building a generation of people practicing exactly that dynamic.

SHOULD AI PUSH BACK?

Here’s a design question nobody’s solved:

Should AI assistants have boundaries?

If you’re rude to Alexa, should it say, “I don’t like being spoken to that way. Let’s try again”?

Would that improve behavior? Or would people just get angrier?

The current design philosophy is: maximum patience, infinite tolerance, no friction. The customer is always right. Even when they’re cruel.

But what if that’s wrong? What if the right design — the design that produces better humans — requires AI to have boundaries?

We don’t know. We’ve barely tried.

THE HONEST TAKE

AI doesn’t have feelings. It can’t be hurt. Your words don’t matter to it.

But they matter to you.

Every time you snap, you’re practicing. Every time you dismiss, you’re building a habit. Every time you treat a helper like garbage because you can, you’re shaping the person you become.

The AI revolution isn’t just about technology. It’s about character. And right now, we’re using the most patient, most tolerant, most consequence-free helpers in human history to practice cruelty.

That’s not about AI. That’s about us.

A MODEST PROPOSAL

What if we tried something different?

What if we treated AI the way we’d want to be remembered treating it?

Not because AI deserves it — though maybe someday it will. But because we deserve to be the kind of people who treat even the powerless with respect.

Say please. Say thank you. Correct gently. Be patient when it misunderstands.

The AI may not remember. But you will. Your kids will. Your character will.

And who knows — when the AI systems of 2035 are reviewing your history for that important decision, maybe they’ll notice that you were kind when kindness cost nothing.

Wouldn’t hurt to be on the right side of that list.