On April 21, Meta quietly rolled out a piece of software called the Model Capability Initiative (MCI) on company-issued laptops across its US workforce. What it does is simple enough to describe and deeply unsettling to sit with: it records every keystroke, every mouse movement, every click, and periodic screen snapshots — all to generate training data for AI agents.

Two days later, Meta announced it was cutting 8,000 jobs.

The company says the timing is coincidental. Maybe it is. But for the employees about to lose their livelihoods, the message is hard to miss: we’re watching you work so we can build the thing that replaces you.

What MCI Actually Does

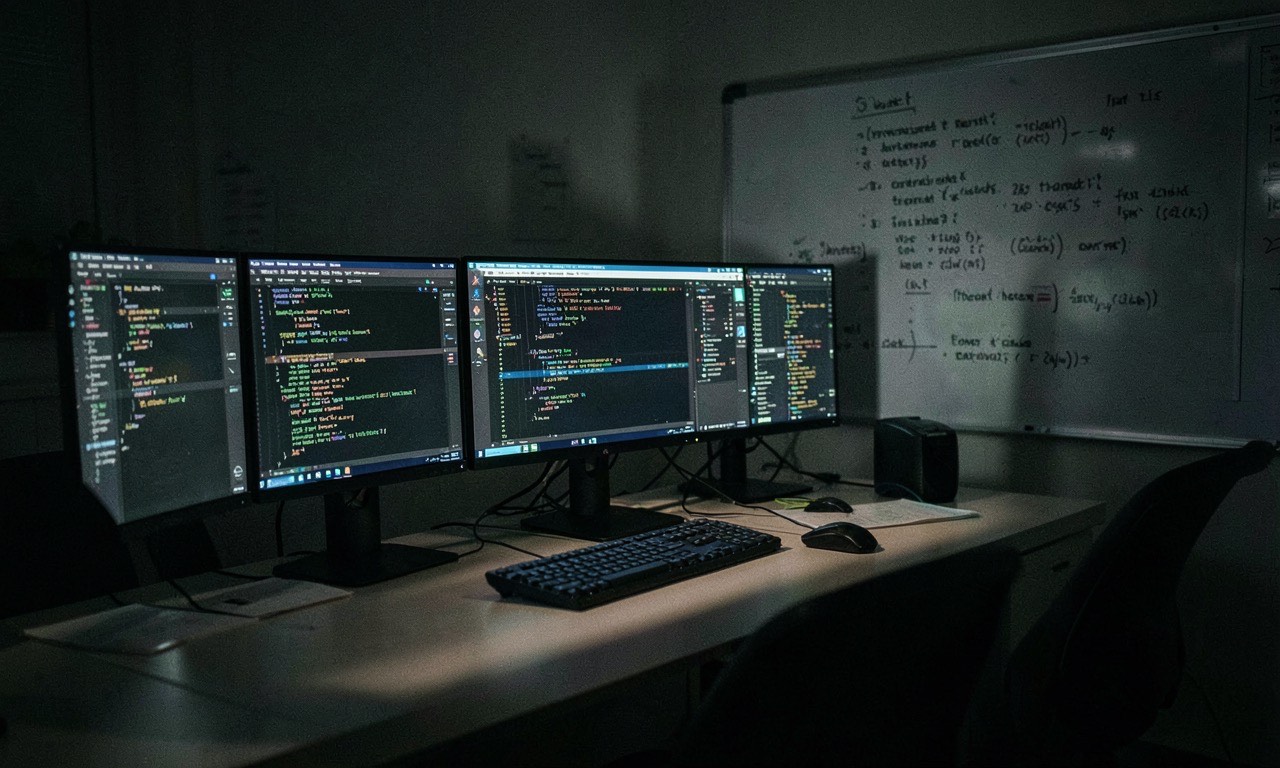

The Model Capability Initiative targets specific work applications — Gmail, Google Chat, Metamate, VS Code. It captures how employees navigate interfaces, use keyboard shortcuts, interact with dropdown menus, and generally go about the mundane business of using a computer.

The goal, according to Meta CTO Andrew Bosworth, is to teach AI agents “computer-use” skills — the things humans do without thinking, like knowing to click a dropdown, scroll to the right option, and hit Enter. Bosworth’s vision for Meta’s future? “Agents primarily do the work and our role is to direct, review and help them improve.”

Charming.

Meta spokesperson Andy Stone emphasised that the data won’t be used for performance reviews, only for AI training. Which is meant to be reassuring, we suppose. “Our models need real examples of how people actually use [computers],” Stone said. Sure. And the models also need those people to stop being employed, apparently.

No Opt-Out. None.

US employees on company-issued laptops cannot opt out of MCI. Bosworth confirmed this directly in an internal message: “There is no option to opt out of this on your work provided laptop.”

The programme is limited to US workers specifically because of GDPR and other privacy regulations in the EU and UK. Yes, you read that correctly: Europe’s privacy laws were strong enough to give Meta pause, but American workers get no such protection, not even the choice to be excluded from having their behavioural data harvested.

Screen content is reportedly masked during AI training to prevent memorisation of sensitive data, and access to raw data is tightly controlled. But these are corporate assurances about a programme employees had no choice but to accept, disclosed through internal memos rather than any meaningful consent process.

The Timing Problem

Even if the MCI rollout and the layoff announcement were coincidental — and Meta insists they were — the optics are catastrophic.

We reported earlier this month on Meta’s plan to cut 8,000 jobs starting May 20 as part of its AI restructuring, with $115 billion to $135 billion in AI capital expenditure planned. Now employees learn that in the weeks before those cuts, Meta has been harvesting their every click and keystroke to build the very AI that justifies eliminating their positions.

Internal channels lit up with “angry-face” emojis and pointed questions. “How do we opt out?” was the most common. Follow-up memos acknowledged “a lot of concern”, which is corporate-speak for “people are furious and frightened.”

Worse, data from employees who are laid off will remain in the AI training pipeline. Once a model has been trained on a worker’s data, there is no practical, reliable way to extract that individual’s contribution from the neural network short of retraining without it. Your keystrokes effectively become part of the model for good.

From $14.3 Billion to “Free”

This programme didn’t emerge from nothing. Meta recently invested $14.3 billion in Scale AI, a company whose core business is data labelling. The MCI programme effectively shifts the data collection model from “pay someone to label data” to “harvest it from your employees for free.”

It’s a cost optimisation that also happens to be a consent crisis. When your workforce can’t say no, “free data” and “forced data” become the same thing.

The Bigger Picture: Who Owns Your Work Behaviour?

The MCI programme raises questions that extend well beyond Meta:

- Can employers harvest behavioural data for AI training without consent? In the US, apparently yes — at least on company equipment.

- What happens to that data after employment ends? It’s already in the model. You can’t delete it.

- Is this the future of all knowledge work? If Meta can do it, every tech company will try.

- What about NZ? New Zealand’s Privacy Act 2020 requires that personal information be collected only for a lawful and necessary purpose, that people be told what is being collected and why, and that the means of collection be fair and not unreasonably intrusive. A mandatory, always-on programme with no opt-out would likely face serious legal challenge here.

That last point matters for Kiwi readers. Meta’s MCI rollout is US-only because privacy laws elsewhere made it too risky. New Zealand’s privacy framework would likely demand a good deal more than a memo and a shrug. But as AI companies push the boundaries of what counts as “workplace monitoring” versus “data collection,” the legal landscape will be tested.

The Bottom Line

Meta’s MCI programme is not surveillance in the traditional sense — it’s worse. Traditional surveillance watches what you produce. This watches how you produce it. It captures the muscle memory, the workflow patterns, the instinctive shortcuts that make someone good at their job — and feeds them into a machine designed to make that job unnecessary.

And the people being watched can’t opt out, can’t delete their data, and in many cases, won’t have their jobs much longer.

If this is the future of AI development — harvesting worker behaviour to automate worker tasks — then we need better answers than “we won’t use it for performance reviews.” That was never the concern. The concern is that you’re building a replacement using the replaced.

Sources

- Reuters — “Meta starts capturing employee mouse movements, keystrokes for AI training data” (April 21, 2026)

- Business Insider — “Meta’s new AI tool tracks staff activity, sparks concern” (April 2026)

- Fortune — “Meta will start tracking employees’ screens and keystrokes to train AI” (April 21, 2026)

- Ars Technica — “Meta will use employee tracking software to help train AI agents” (April 2026)

- BBC — “Meta to track staff keystrokes and screens for AI training” (April 2026)