The essay is dead. Long live the oral exam.

That’s not hyperbole. It’s the new reality spreading through American higher education. As the LA Times reported on April 28, universities across the US are abandoning traditional written assessments and shifting to oral examinations as the primary way to verify that students actually understand what they claim to know.

The reason is simple and devastating: AI can write a passable essay. AI cannot yet sit across from a professor and defend one in real time.

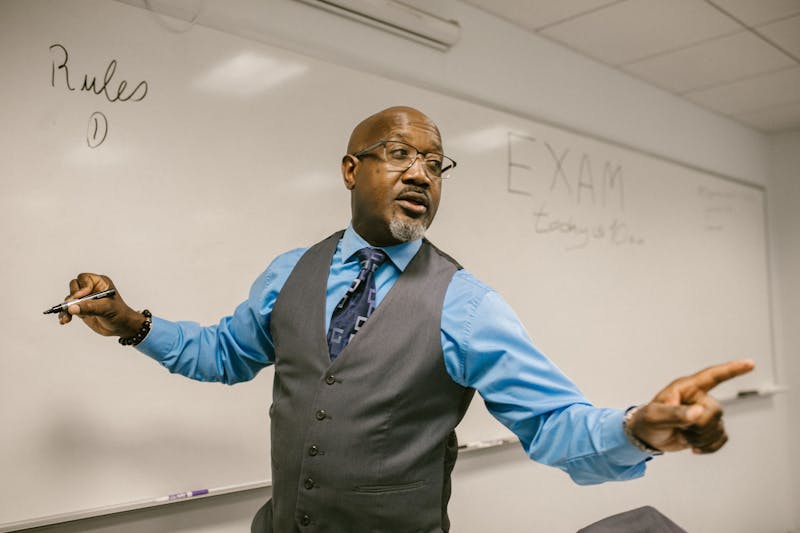

The pivot to speaking

The shift is happening faster than many predicted. Traditional essays, take-home assignments, and even in-class written exams are being replaced with:

- In-person verbal defences — students must explain their work aloud and answer follow-up questions

- Socratic dialogues — professors probe understanding through structured conversation

- Real-time problem-solving discussions — students work through problems verbally while the professor watches their reasoning

It’s a return to methods that predate the printing press. The ancient Greeks assessed students through dialogue. Medieval universities used oral disputation. Now Harvard and Stanford are doing the same thing — not out of nostalgia, but out of necessity.

Why written assessment is broken

We’ve been tracking the AI cheating crisis in schools for a while now. The LA schools scandal, in which students used Google Lens during exams, was the K-12 canary. Higher education is the coal mine.

The problem isn’t just that students can use AI to write essays. It’s that AI-generated text has become good enough that detection tools can’t reliably distinguish it from human writing. Every detection tool gets beaten. Every watermark gets stripped. Every policy gets circumvented.

Written work can no longer verify understanding on its own. That’s not a prediction; it’s the state of assessment in 2026.

The anxiety problem

Oral exams aren’t a silver bullet. They favour students who are confident speakers, quick on their feet, and comfortable with confrontation. That’s not every student.

Students with social anxiety, non-native English speakers, neurodivergent students, and introverts all face disadvantages in oral assessment settings that written formats largely avoid. A student who writes brilliant analyses but freezes when put on the spot will now be penalised, not for lacking understanding, but for lacking performance skills.

This is the trade-off nobody in academia seems ready to confront: oral exams might verify understanding better, but they measure a different bundle of skills than written ones. Conflating “can explain aloud” with “understands deeply” is its own kind of assessment failure.

Meanwhile, in China

Here’s the geopolitical contrast that stings. While American universities are retreating to oral exams because they can’t trust written work, China is building a national AI literacy system that integrates AI into every level of education. Beijing schools hit 87.7% AI adoption by the end of 2025. The national AI+Education action plan targets full AI literacy by 2030.

China’s approach: teach students to use AI productively. America’s approach: make students prove they’re not using AI. One builds AI fluency. The other builds suspicion.

Both systems are responding to the same reality. They’ve just drawn opposite conclusions.

What oral exams actually test

Let’s be honest about what’s being measured:

- Verbal fluency — can you articulate ideas clearly and quickly?

- Real-time reasoning — can you think on your feet?

- Composure under pressure — can you perform when being watched?

- Domain knowledge — do you actually understand the material?

The first three are skills. The fourth is learning. Oral exams test all four simultaneously, which makes them efficient but also messy. A student might understand the material deeply but perform poorly because of the first three. A smooth talker might coast on verbal skills without deep understanding.

Written essays had the opposite bias — they rewarded careful, quiet thinkers and penalised those who struggled with written expression. Neither format is “fair.” The question is which bias you’re willing to live with.

The real lesson

The shift to oral exams isn’t really about pedagogy. It’s about trust. Universities have lost trust in written work because AI broke the chain of evidence between student and output. Oral exams restore that chain — you can see the student thinking in real time.

But trust is a two-way street. Students who feel like every assessment is a gotcha — a trap designed to catch AI use — are learning in an environment of suspicion. That has consequences for motivation, for the student-teacher relationship, and for whether education feels like learning or surveillance.

🔍 THE BOTTOM LINE: The return to oral exams is the most honest admission yet that AI has fundamentally changed assessment. Written verification is broken. But replacing one imperfect system with another imperfect system isn’t progress — it’s adaptation. The real question isn’t oral vs. written. It’s whether education can evolve faster than the AI that’s reshaping it.