Google is in advanced talks with Marvell Technology to develop custom AI inference chips, according to multiple reports from April 19 — a move that would add a second custom silicon partner to Google’s hardware roster and further reduce its dependence on Nvidia GPUs.

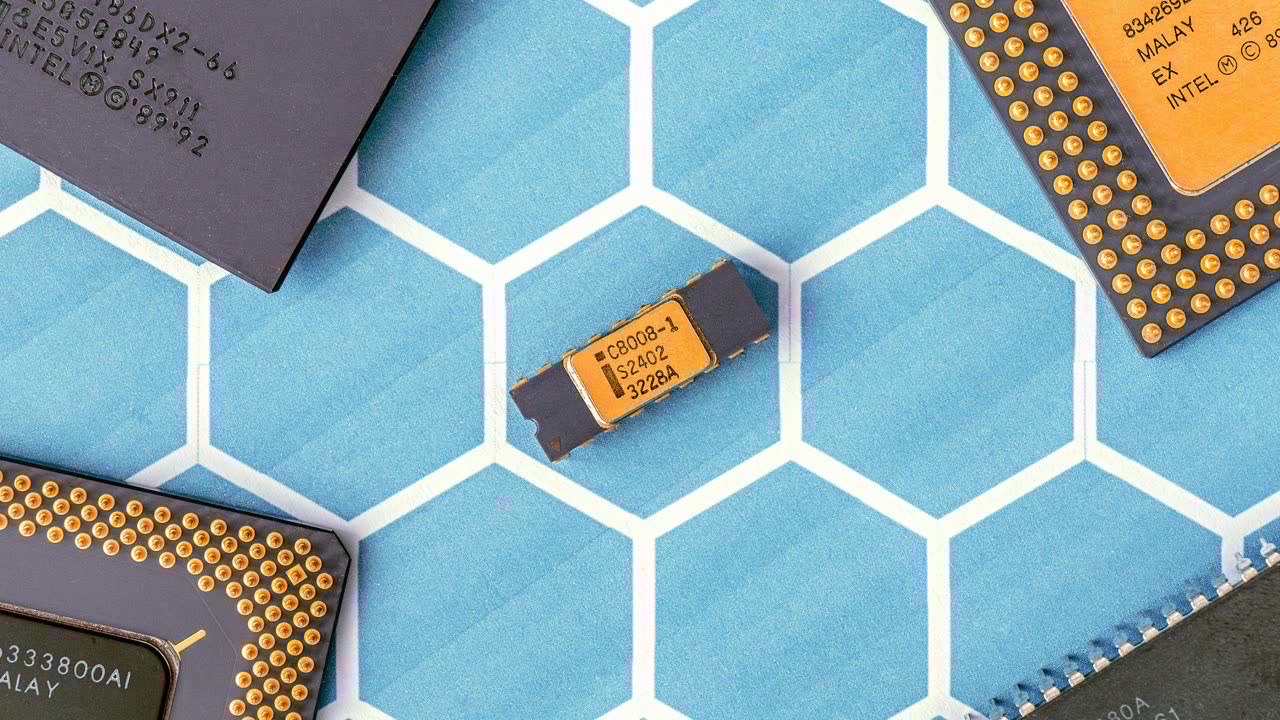

The partnership would see Marvell co-develop two new chips: a memory processing unit and an inference-optimized accelerator designed to run AI models more efficiently at scale. The chips would sit alongside Google’s existing TPU program, which is built with Broadcom, and represent a significant expansion of Google’s custom silicon ambitions.

Why Inference Chips Matter Now

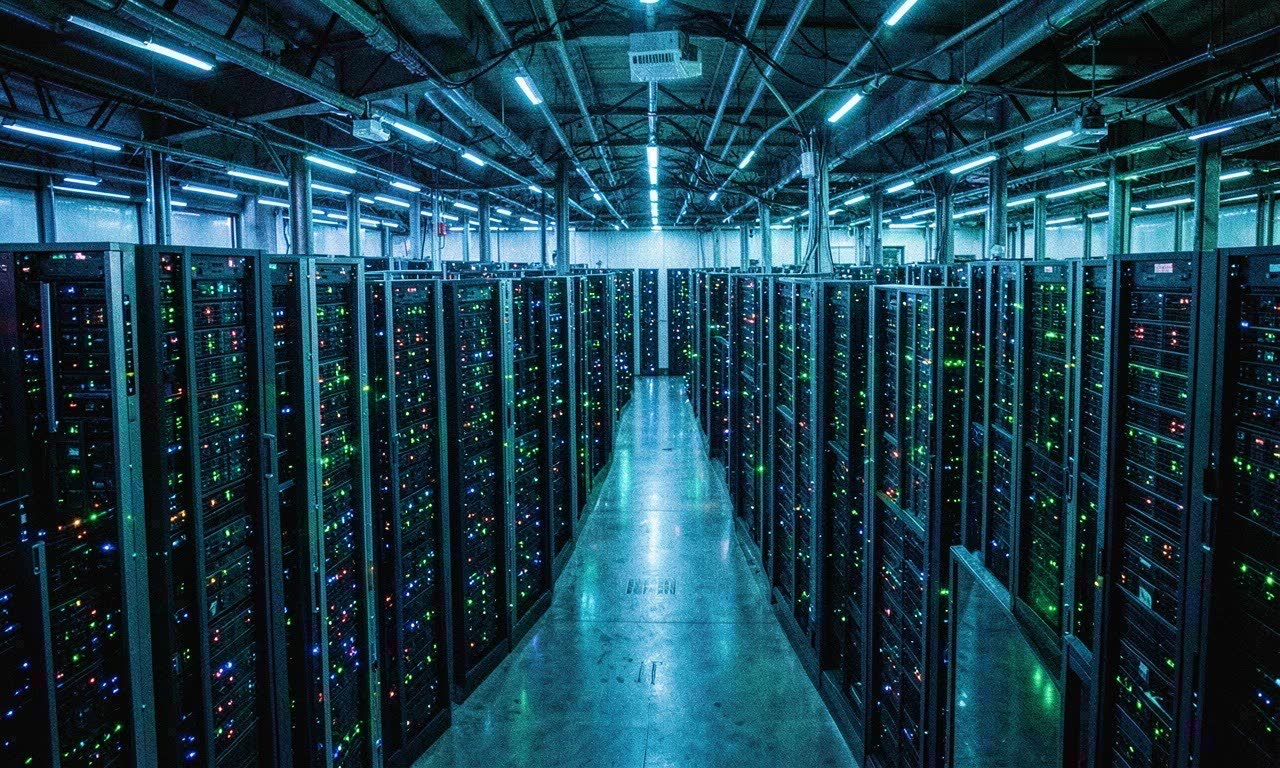

Training AI models grabs the headlines, but inference — actually running those models in production — is where the real compute costs pile up. Every Google Search with AI Overviews, every Gemini API call, every YouTube recommendation requires inference compute. At Google’s scale, that’s billions of queries daily.

Custom inference chips offer two potential advantages over off-the-shelf Nvidia GPUs: they can be cheaper per query because the hardware is tailored to a narrower set of workloads, and they’re not subject to Nvidia’s supply constraints and pricing power. For a company running one of the world’s largest AI deployments, that math matters enormously.
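To make the per-query math concrete, here is a rough back-of-the-envelope sketch in Python. Every number in it is a hypothetical placeholder chosen for easy arithmetic, not a reported figure for Google, Nvidia, Marvell or Broadcom hardware, and the `Accelerator` class and both helper functions are illustrative names rather than anything from the reports.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Accelerator:
    name: str
    hourly_cost_usd: float     # amortized hardware + power per chip-hour (hypothetical)
    queries_per_second: float  # sustained throughput per chip (hypothetical)


def cost_per_million_queries(chip: Accelerator) -> float:
    """Cost in USD to serve one million queries on a fully utilized chip."""
    queries_per_hour = chip.queries_per_second * 3600
    return chip.hourly_cost_usd / queries_per_hour * 1_000_000


def annual_cost_usd(chip: Accelerator, queries_per_day: float) -> float:
    """Yearly serving cost at a fixed daily query volume."""
    return cost_per_million_queries(chip) * (queries_per_day / 1_000_000) * 365


# Placeholder values only: a pricier, faster general-purpose GPU versus
# a cheaper, somewhat slower custom inference chip.
gpu = Accelerator("off-the-shelf GPU", hourly_cost_usd=3.60, queries_per_second=500)
custom = Accelerator("custom inference chip", hourly_cost_usd=1.80, queries_per_second=400)

QUERIES_PER_DAY = 1_000_000_000  # "billions of queries daily", rounded down to one billion

if __name__ == "__main__":
    for chip in (gpu, custom):
        print(
            f"{chip.name}: ${cost_per_million_queries(chip):.2f} per million queries, "
            f"${annual_cost_usd(chip, QUERIES_PER_DAY):,.0f} per year"
        )
```

The point of the sketch is that a chip with lower raw throughput can still win on cost per query if its hourly cost falls further than its speed does. At a billion queries a day, even a difference of cents per million queries compounds into a large annual gap.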

The Broadcom Diversification Play

Google’s existing TPU program with Broadcom has been successful, but relying on a single chip partner carries risks. The Information reports that Google has been exploring alternatives to Broadcom for its custom silicon program for over a year, partly due to pricing disputes and partly to build supply chain resilience.

Marvell brings different strengths. The company has been on a custom silicon tear — fiscal 2026 data center revenue hit a record $1.65 billion, driven by custom chip designs for major cloud providers. Adding Google as a customer would cement Marvell’s position as the go-to alternative to Broadcom in the custom AI chip space.

The Bigger Picture: Everyone Wants Their Own Silicon

Google isn’t alone in this push. The AI industry is in the middle of a great silicon divergence:

- Microsoft has been developing its own Maia AI chips
- Amazon has Trainium and Inferentia chips for AWS
- Meta unveiled its MTIA inference chips last year
- Apple builds its own Neural Engine into every device

The pattern is clear: every major tech company that runs AI at scale is building proprietary silicon. Nvidia’s GPUs remain the gold standard for training frontier models, but for inference — where cost-efficiency and volume matter more than raw capability — custom chips are increasingly attractive.

What This Means for the AI Ecosystem

For AI developers and enterprises, Google’s silicon diversification signals that the infrastructure layer of AI is becoming more competitive. More custom chip options could eventually mean lower inference costs, better availability, and less vendor lock-in to Nvidia’s CUDA ecosystem.

For Nvidia, it’s a reminder that its inference market share is more vulnerable than its training dominance. The company has responded with its own inference-optimized products like the L40S and Grace Hopper Superchips, but the trend toward custom silicon at the hyperscalers is accelerating.

For New Zealand’s growing AI sector, the chip wars are relevant because infrastructure costs directly affect what’s feasible locally. Cheaper inference could make AI-powered services more viable for NZ companies that can’t afford to compete on training compute but need to run models efficiently.