Google Cloud and NVIDIA have launched A5X, a rack-scale AI infrastructure system built on NVIDIA’s next-generation Vera Rubin NVL72 hardware. It scales to 960,000 GPUs in a single cluster. That’s not a typo.

🔍 THE BOTTOM LINE: The scale of AI infrastructure is now in a tier that only a handful of companies can reach. Google, Microsoft, and Amazon are building AI factories. Everyone else is renting time on them.

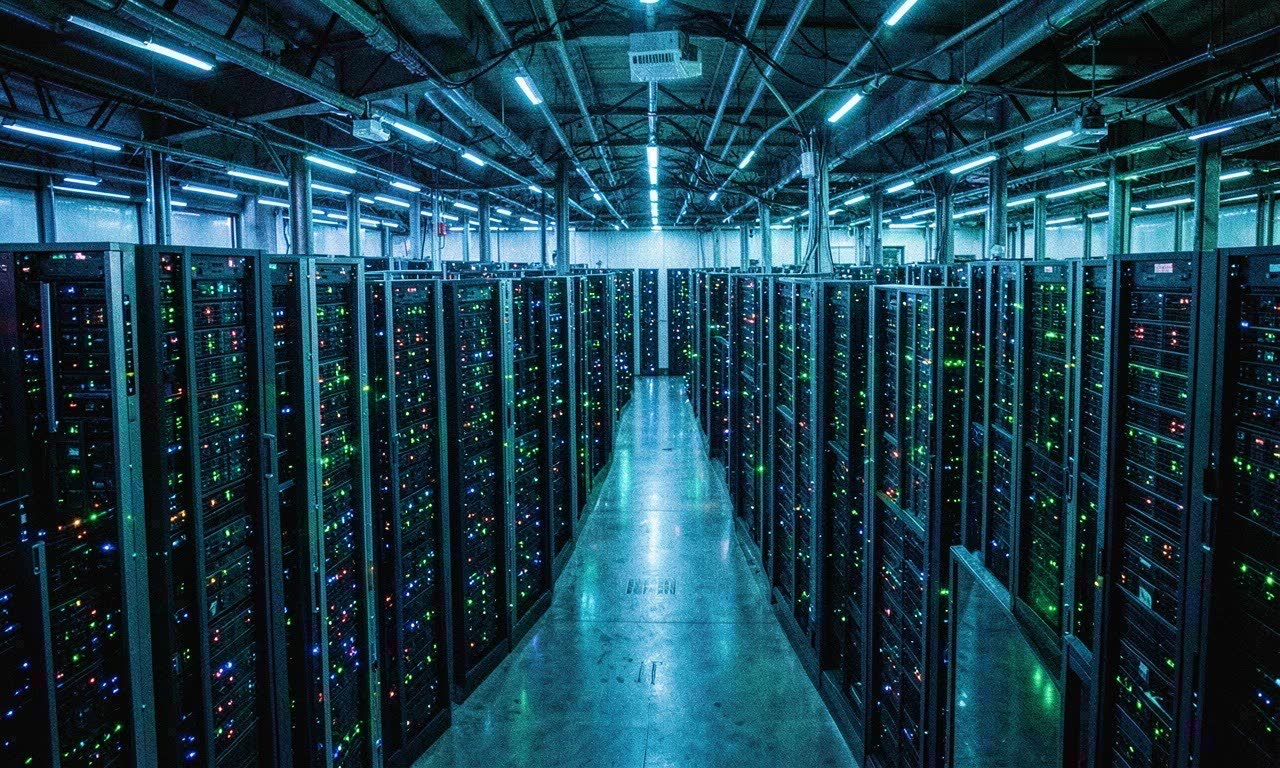

🏭 What A5X Actually Is

A5X is Google Cloud’s next-generation AI infrastructure offering, announced at Google Cloud Next 2026. It’s built on:

- NVIDIA Vera Rubin NVL72 — The successor to Blackwell, with 336 billion transistors per GPU

- 288 GB HBM4 per GPU — 22 TB/s memory bandwidth per chip

- 50 petaFLOPS of FP4 inference per GPU

- ConnectX-8 SuperNICs — 800 Gb/s networking per node

- Liquid cooling — Required at this density

A single A5X rack has 72 Rubin GPUs. The full cluster scales to roughly 13,333 racks, or about 960,000 GPUs in total.

To put that in perspective: GPT-4 was trained on approximately 25,000 A100 GPUs. A5X offers roughly 38× that GPU count in a single system, and each Rubin GPU is several hardware generations newer than an A100.
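For anyone who wants to check the arithmetic, here is a minimal Python sketch. Every input is a figure quoted in this article; the constant and variable names are just illustrative choices, and the comparison is by raw GPU count only, not by delivered performance.

```python
# Figures quoted in the article (not independently verified here).
GPUS_PER_RACK = 72        # Rubin GPUs in one A5X rack
CLUSTER_GPUS = 960_000    # advertised maximum cluster size
GPT4_A100_GPUS = 25_000   # approximate GPT-4 training fleet
HBM_PER_GPU_GB = 288      # HBM4 capacity per GPU

# Whole racks that fit inside the advertised GPU count.
full_racks = CLUSTER_GPUS // GPUS_PER_RACK

# GPUs actually held by that many full racks (slightly under 960,000,
# which shows the headline number is rounded).
gpus_in_full_racks = full_racks * GPUS_PER_RACK

# Raw GPU-count ratio against the GPT-4 training fleet.
gpu_count_ratio = CLUSTER_GPUS / GPT4_A100_GPUS

# Aggregate HBM4 in a single rack, in GB.
rack_hbm_gb = GPUS_PER_RACK * HBM_PER_GPU_GB

print(f"Full racks:            {full_racks:,}")
print(f"GPUs in those racks:   {gpus_in_full_racks:,}")
print(f"GPU-count ratio:       {gpu_count_ratio:.1f}x")
print(f"HBM4 per rack:         {rack_hbm_gb:,} GB")
```

The 13,333-rack figure comes out to 959,976 GPUs, so "960,000" is a rounded headline number, and the exact ratio against 25,000 A100s is 38.4×.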

💰 What It Costs

Google and NVIDIA haven’t published pricing for A5X time. But based on current GPU cloud rates:

- NVIDIA H100: ~$2-3/hour per GPU on-demand

- NVIDIA Blackwell B200: ~$4-6/hour per GPU estimated

- NVIDIA Rubin: Likely $8-12/hour per GPU at launch

At $10/hour per GPU, running the full 960K cluster for one hour would cost approximately $9.6 million.
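The same back-of-envelope sum can be run across the whole guessed price range. This is a rough sketch only: the $8 to $12 rates are this article's speculation, not published pricing, and the helper name `cluster_cost_per_hour` is made up for illustration. It also ignores committed-use discounts, networking, and storage.

```python
CLUSTER_GPUS = 960_000  # full A5X cluster size quoted in the article


def cluster_cost_per_hour(gpu_count: int, rate_usd_per_gpu_hour: float) -> float:
    """Hourly cost in USD of renting gpu_count GPUs at a flat per-GPU rate."""
    return gpu_count * rate_usd_per_gpu_hour


# Speculative per-GPU hourly rates (assumptions, not real price-list values).
for rate in (8, 10, 12):
    hourly = cluster_cost_per_hour(CLUSTER_GPUS, rate)
    daily = hourly * 24
    print(f"${rate}/GPU-hour: ${hourly / 1e6:.2f}M per hour, ${daily / 1e6:.1f}M per day")
```

At the $10 midpoint this reproduces the $9.6 million per hour figure, which works out to about $230 million for a single day on the full cluster.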

This is infrastructure for training frontier models, not for inference or startups. The customers are other hyperscalers, national AI programmes, and the handful of companies building foundation models.

🌐 The Three-Company Problem

The scale of A5X highlights a growing concentration in AI infrastructure:

| Company | GPU Fleet (estimated) | Infrastructure Tier |
|---|---|---|
| Google | 960K+ (A5X) | Factory |
| Microsoft | 400K+ (Blackwell) | Factory |
| Amazon | 300K+ (Trn2/Ultra) | Factory |
| Meta | 350K+ (Blackwell) | Factory |
| Everyone else | <50K | Tenant |

Three to four companies control the infrastructure layer. Everyone else — including most AI companies — rents compute from them. Your startup’s model runs on Google’s or Microsoft’s hardware. Your inference happens on their chips. Your training happens in their data centres.

This isn’t just a cost problem. It’s a dependency problem.

🇳🇿 NZ Relevance

New Zealand has no GPU factories. No domestic chip manufacturing. No hyperscaler data centres running Rubin clusters.

For NZ:

- AI startups will continue relying on US cloud providers for training

- Research institutions face ever-widening compute gaps

- Sovereign AI discussions are about to become more urgent — if only three companies can afford to train frontier models, what does that mean for national AI capability?

- The pricing guide at singularity.kiwi tracks what’s available for local inference, but frontier model training is increasingly out of reach for anyone not sitting on billions in compute budget

🔮 What’s Next

NVIDIA’s Rubin Ultra (the dual-Rubin configuration) is expected later in 2026, pushing density even higher. Google has already signalled that A5X is the first of multiple Rubin-based offerings.

The trajectory is clear: AI infrastructure is consolidating, the scale is accelerating, and the gap between what’s possible and what’s affordable is widening.