Minnesota is barrelling toward a decision that could reshape how AI monitors children in American schools. Lawmakers are advancing a $4 million pilot program that would place AI-driven surveillance technology in school districts across the state — and the debate over whether this makes students safer or simply more watched is intensifying by the day.

What the Bill Proposes

The legislation, authored by Representative Ben Bakeberg, would allocate approximately $4 million to the Minnesota Department of Public Safety to implement a school safety “threat assessment” pilot. The program would deploy AI surveillance systems in one school district per congressional district — eight districts total.

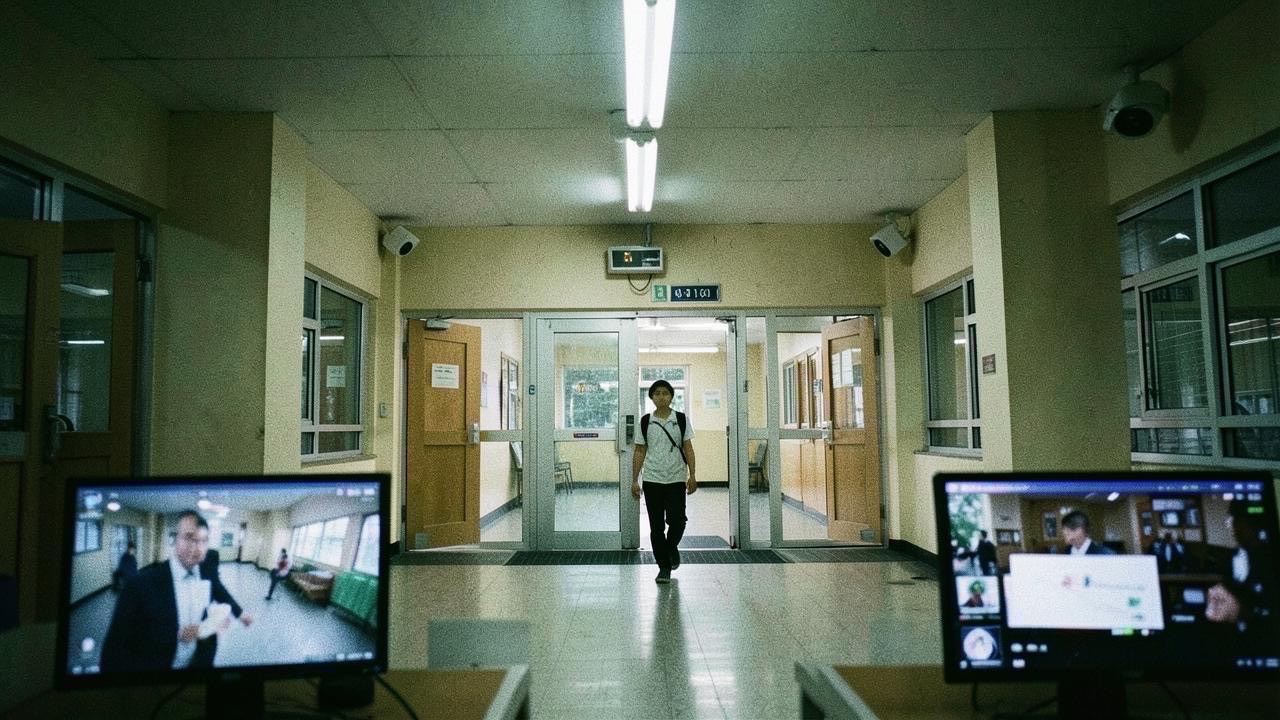

The technology combines cameras, radar-based sensors, and AI software designed to detect weapons, including firearms and knives, even when they are concealed in backpacks or clothing. When a potential threat is identified, the system sends real-time alerts to school officials and, in some cases, emergency responders.

Supporters frame it as a proactive tool: stop threats at school entrances and exterior spaces before they reach classrooms. The bill remains in committee and has not yet received a final vote.

The Privacy Backlash

The ACLU of Minnesota has come out forcefully against the proposal, arguing that increased surveillance does not necessarily improve safety and could disproportionately affect certain student populations.

The core concerns are familiar but no less urgent:

- Algorithmic bias. AI surveillance systems have a well-documented history of higher false positive rates for people of color and individuals with disabilities. In a school setting, that means students who are already marginalized bear the brunt of misidentification.

- Data collection without guardrails. The bill does not yet include detailed provisions for what happens to the data these systems collect, how long it is retained, or who has access to it.

- Effectiveness is unproven. Testimony before lawmakers highlighted a striking gap: there is insufficient independent evidence that AI-based surveillance systems actually reduce school violence. The technology is being sold on promise, not proof.

Critics argue the same funding would deliver more measurable safety gains if directed toward mental health services, counseling, and violence prevention programs — interventions with decades of evidence behind them.

The National Stakes

Minnesota is not operating in a vacuum. If this pilot proceeds and is later expanded, other states will take note. The United States has been here before with school surveillance: metal detectors, armed resource officers, and extensive camera systems have proliferated in the decades since Columbine, with mixed evidence that any of them meaningfully reduce the likelihood of mass violence.

What makes AI surveillance different is its scale and opacity. Traditional cameras record what happened. AI systems interpret behavior, flag threats, and make decisions about which students warrant intervention — all without the student or their family understanding the criteria. That is a fundamentally different relationship between institutions and the people they serve, and it is being deployed on minors.

What Happens Next

The bill is still working through committee. Participating districts would be selected statewide, and schools already using comparable systems would be excluded. The legislation calls for a formal evaluation of the program’s effectiveness, with findings intended to inform future decisions about broader implementation.

But the evaluation happens after the deployment. By then, eight school districts will have installed AI surveillance infrastructure, trained staff to rely on its alerts, and normalized a new layer of monitoring in school environments. Rolling that back is far harder than declining to install it in the first place.

For Minnesota’s students, the question is not whether schools should be safe. Everyone agrees on that. The question is whether surveillance AI actually delivers that safety — or simply delivers surveillance.

SOURCES

- MinneapoliMedia — Minnesota Lawmakers Advance AI School Surveillance Pilot, Raising Safety and Privacy Debate