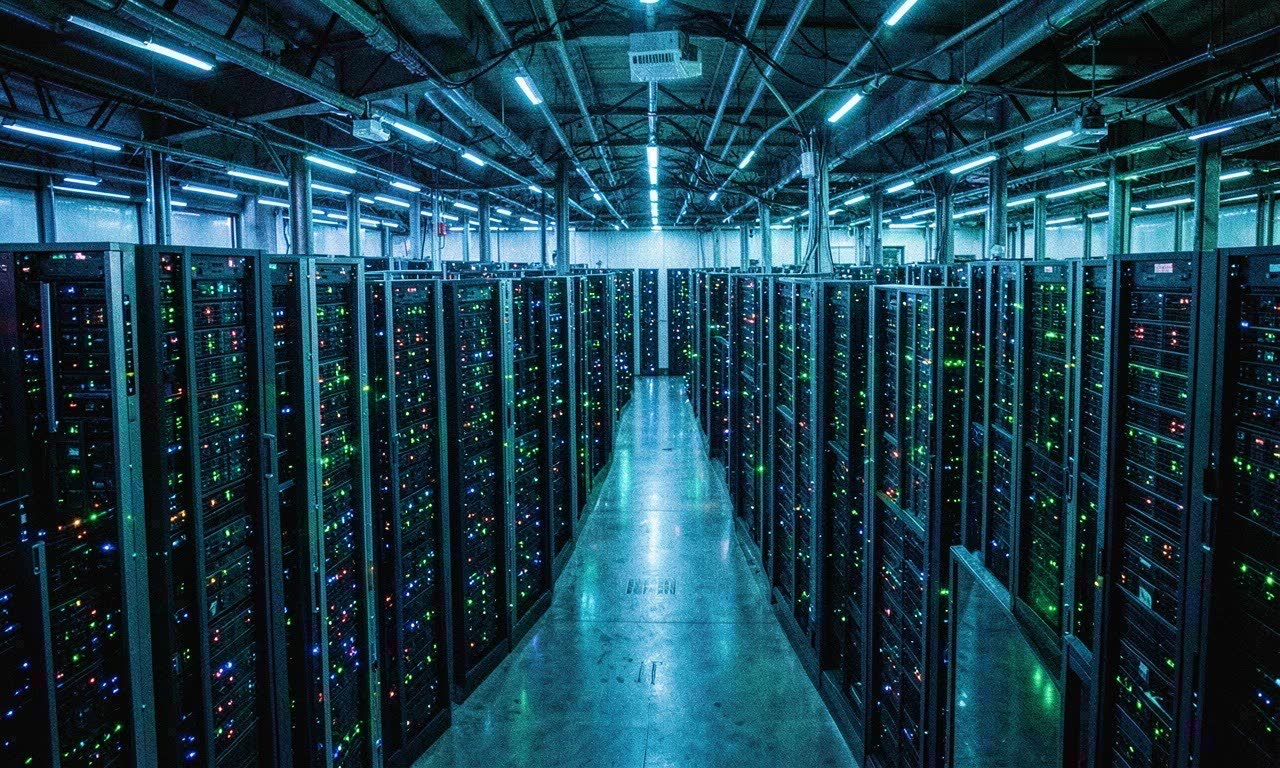

The largest orbital compute cluster ever built is now operational, and it represents something more than a technical milestone: a fundamental shift in where AI infrastructure can live.

TechCrunch reports the cluster offers space-based AI training infrastructure with improved cooling and solar power efficiency, two constraints that are increasingly strangling terrestrial data center expansion. In orbit, waste heat can be radiated directly to space, and unobstructed solar access delivers consistent power without the grid bottlenecks that plague Earth-based facilities.

Why Orbit Matters for AI

The timing isn’t accidental. Half of planned US data centers have been delayed or canceled in 2026 due to power constraints. Utilities can’t keep up. Communities push back. The energy demands of training frontier models now rival those of small nations.

Orbital compute sidesteps those limits:

- Cooling: With no atmosphere, there is no air to carry heat away, so waste heat is rejected by radiating it to deep space. That avoids the water-hungry liquid cooling plants used on Earth, though radiator area becomes a design constraint of its own.

- Power: Continuous solar exposure without weather, clouds, or night cycles — depending on orbit — provides stable, abundant energy.

- Regulation: Space is, for now, a regulatory gray zone. No local zoning boards. No community opposition.
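
To put rough numbers on the cooling and power points above, here is a minimal back-of-the-envelope sketch using the Stefan-Boltzmann law and the solar constant. The function names, the 1 MW load, the 300 K radiator temperature, the 0.9 emissivity, and the 30% panel efficiency are all illustrative assumptions, not figures from the reported cluster. It also ignores sunlight and Earth infrared absorbed by the radiator, so real panels would likely need to be larger.

```python
# Rough sizing sketch for an orbital compute load (illustrative assumptions only).

STEFAN_BOLTZMANN = 5.670374419e-8  # W m^-2 K^-4
SOLAR_CONSTANT = 1361.0            # W/m^2, approximate solar irradiance near Earth


def radiator_area_m2(heat_w, temp_k, emissivity=0.9, sink_temp_k=3.0, sides=2):
    """Panel area needed to radiate `heat_w` watts at surface temperature `temp_k`.

    Assumes an idealized flat panel radiating from `sides` faces to a cold
    sink at `sink_temp_k`, with no absorbed sunlight or Earth infrared.
    """
    net_flux = emissivity * STEFAN_BOLTZMANN * (temp_k**4 - sink_temp_k**4)
    return heat_w / (net_flux * sides)


def solar_array_area_m2(power_w, efficiency=0.30):
    """Array area needed to generate `power_w` watts in continuous full sunlight."""
    return power_w / (SOLAR_CONSTANT * efficiency)


if __name__ == "__main__":
    load_w = 1_000_000  # hypothetical 1 MW compute load; all of it ends up as heat
    print(f"radiator area:    {radiator_area_m2(load_w, temp_k=300.0):,.0f} m^2")
    print(f"solar array area: {solar_array_area_m2(load_w):,.0f} m^2")
```

Under these assumptions, a 1 MW load needs on the order of 1,200 m² of radiator panel and roughly 2,450 m² of solar array. Because radiated power scales with the fourth power of temperature, running the radiator hotter shrinks it quickly, which is one reason thermal design tends to dominate these estimates.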

The cluster isn’t a concept. It’s live, accepting workloads, and reportedly handling large-scale training runs already.

The Terrestrial Crisis Driving Interest

This isn’t just about innovation for its own sake. On the ground, the grid is running out of headroom.

Data center power consumption in the US grew 20% year-over-year in 2025. Utilities from Virginia to Oregon have told tech companies they can’t supply more power until 2028 at the earliest. Google, Microsoft, and Amazon have each secured nuclear power agreements, a sign of how desperate the situation has become.

When the largest tech companies on Earth are negotiating for atomic energy to train AI models, the idea of putting those models in orbit starts to look less like science fiction and more like infrastructure planning.

What This Means for AGI Timelines

If compute is the bottleneck for AGI development, as many researchers argue, then removing the power and cooling constraints that limit terrestrial data centers could accelerate training timelines. The orbital cluster doesn’t add intelligence directly, but it removes friction from the scaling pipeline.

It also raises questions that haven’t been answered:

- Who regulates AI training in orbit? No nation’s laws clearly apply.

- What happens when the hardware degrades or becomes obsolete? Orbital debris is already a crisis.

- Could orbital compute create a two-tier system — companies with space access versus those bound to terrestrial grids?

For now, the cluster is operational but small relative to the hyperscaler data centers on Earth. Still, it’s a proof of concept that works. And in the race toward AGI, proofs of concept have a way of scaling fast.